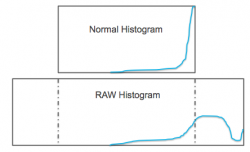

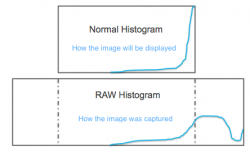

I understand the histogram... it's a visual representation of the distribution of pixels at different brightness levels.
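To pin down what I mean by that, here is a minimal sketch of how I picture the histogram being built, assuming 8-bit brightness values (0 to 255). The function name `brightness_histogram` and the sample pixel list are made up for illustration; real editors may bin per channel or use a luminance weighting instead.

```python
def brightness_histogram(pixels, levels=256):
    """Count how many pixels fall on each brightness level (0..levels-1)."""
    counts = [0] * levels
    for p in pixels:
        counts[p] += 1
    return counts

# Hypothetical 8-bit brightness values for a tiny image.
pixels = [0, 0, 12, 128, 128, 128, 255, 255]
hist = brightness_histogram(pixels)

# The bar at the far right (level 255) is what looks "pegged".
print(hist[255])
```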

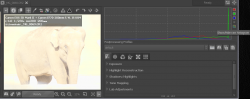

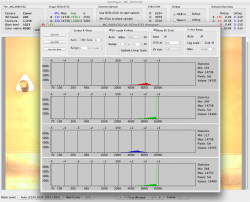

In this photo example (borrowed from another forum), the histogram accurately represents what is being displayed and therefore, you would assume, what the camera's sensor captured. You would think the highlights are blown and unrecoverable: they're all pegged to the right, at the brightest white your screen can display.

However, after adjusting the exposure etc., you can easily get a picture and histogram that display nicely (see the other image). So information you would think was pegged or clipped was not in fact clipped.
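Here is a rough sketch of the distinction as I understand it, not how any particular raw converter actually works. It assumes a 14-bit raw file with a saturation value of 16383, a purely linear rendering to 8-bit display values (no tone curve or gamma), and made-up sample values; `render`, `count_display_clipped` and `count_sensor_clipped` are hypothetical names.

```python
RAW_WHITE_LEVEL = 16383  # assumed 14-bit sensor saturation value
DISPLAY_MAX = 255        # brightest value an 8-bit display rendering can hold

def render(raw_values, gain):
    """Scale raw values to 8-bit display values with a linear gain, clipping at 255."""
    out = []
    for v in raw_values:
        scaled = v / RAW_WHITE_LEVEL * gain * DISPLAY_MAX
        out.append(min(DISPLAY_MAX, round(scaled)))
    return out

def count_display_clipped(display_values):
    """Pixels that sit at the right edge of the displayed histogram."""
    return sum(1 for v in display_values if v >= DISPLAY_MAX)

def count_sensor_clipped(raw_values):
    """Pixels where the sensor itself saturated, so no detail exists to recover."""
    return sum(1 for v in raw_values if v >= RAW_WHITE_LEVEL)

# Hypothetical raw highlight values; only the last one reached saturation.
raw = [3000, 5000, 8000, 12000, 16383]

bright = render(raw, gain=4.0)  # the rendering that looks blown out
pulled = render(raw, gain=1.0)  # the same data after pulling exposure down

print(count_display_clipped(bright))  # 4 pixels pegged in the displayed histogram
print(count_sensor_clipped(raw))      # 1 pixel clipped in the data itself
print(count_display_clipped(pulled))  # 1 pixel still pegged after the adjustment
```

In this toy case four of the five pixels look clipped in the bright rendering, but only one is clipped in the raw data, which matches what the two screenshots seem to show.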

So while the histogram is an accurate representation of what is displayed, it's not an accurate representation of what data is actually there and what is truly clipped... is it?

If not, then why don't we have the tools at our disposal to tell when image data is truly clipped vs being displayed as clipped?

Or am I confused?
