This is what I meant above about differences in perspective being a good thing.

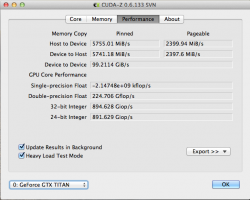

Hey, can you check CUDA-Z?

The single precision number makes no sense.

You're right that it makes no sense. But what does it mean? CUDA-Z needs an update, because the Titan is too powerful for it. See the snapshots of a set of Titan triplets, and note the single and double precision measures. Then compare them to the pic of the 690 twins - that's actually just one GTX 690 card. All four cards were in my old EVGA SR-2 system [ http://browser.primatelabs.com/geekbench2/500630 ] at the same time that performance was [attempted to be] measured. From top to bottom in the PCI-e 2.0 slots of my SR-2, they were arrayed as Titan 0, Titan 1, Titan 2, and the 690.

The number we're getting (an error message for an out-of-range value [ see also post #564 here: https://forums.macrumors.com/threads/1333421/ ]) appears because the value to be read is too high for CUDA-Z to read accurately [and with overclocking, I'm getting the same thing for double precision on Titans 0 and 2]. Since all three Titans were clocked the same, as a group, in Precision X, we know that for double precision each of those two cards is getting more than 2,109.1 Gflop/s, or 2.1091 Tflop/s. Why do we know that? CUDA-Z can at least read up to the performance level of that slow poke, Titan 1: 2.1091 Tflop/s. Because slow poke was the middle card towards the top, it reached its thermal max sooner than the outer and lower cards. So the other two Titans were blazing with my overclock, which pushed even slow poke's double precision floating point peak to over 60% higher than that of a $3.5K+ Tesla K20X (1.31 Tflops) [ http://www.nvidia.com/object/tesla-servers.html ].

The point where the error surfaces is probably somewhere between 2.1091 Tflops and about 1.02x that figure (1083 / 1059 = 1.02266288951841). That ratio is the approximate delta in the 24/32-bit integer results in Giop/s, which I'm using as a proxy since I didn't have anything else to use as a performance measure. I was running LuxMark when I took these snapshots.

I know that the SR-2 is not the best home for GTX Titans and 690s, and that's why my performance is not at its max. But I'll soon place them in a better home [ maybe this one - http://www.supermicro.com/products/system/4U/7047/SYS-7047GR-TRF.cfm ] that has 4+ PCI-e 3.0 slots, at least 4 of which are x16, for 4 double-wide Titans - namely, Atlas, Prometheus, Epimetheus, and Menoetius. I also recognize that these were not actually the names of the Titans, but the names of the sons of Iapetus (who was a Titan).
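For anyone who wants to check the arithmetic behind the "over 60%" and "1.02x" figures, here's a quick Python sketch using only the numbers quoted above. The constant and function names are my own, and scaling the last readable double precision figure by the integer Giop/s ratio is the same rough proxy assumption I made above, not something CUDA-Z itself reports.

```python
# Figures quoted in the post.
SLOW_POKE_DP_TFLOPS = 2.1091  # Titan 1, the last value CUDA-Z could still read
K20X_DP_TFLOPS = 1.31         # Tesla K20X peak double precision
INT_READING_HIGH = 1083       # integer Giop/s reading used as a proxy (higher)
INT_READING_LOW = 1059        # integer Giop/s reading used as a proxy (lower)


def percent_above(value: float, reference: float) -> float:
    """Return how far `value` exceeds `reference`, as a percentage."""
    return (value / reference - 1.0) * 100.0


def estimated_ceiling(last_readable: float, high: float, low: float) -> float:
    """Scale the last readable figure by the integer-throughput ratio.

    Assumption: double precision throughput scales like the integer
    proxy, which is only a rough stand-in.
    """
    return last_readable * (high / low)


if __name__ == "__main__":
    gain = percent_above(SLOW_POKE_DP_TFLOPS, K20X_DP_TFLOPS)
    ceiling = estimated_ceiling(SLOW_POKE_DP_TFLOPS, INT_READING_HIGH, INT_READING_LOW)
    print(f"Titan 1 vs K20X: {gain:.1f}% higher")
    print(f"Proxy ratio: {INT_READING_HIGH / INT_READING_LOW:.5f}")
    print(f"Error likely surfaces between {SLOW_POKE_DP_TFLOPS:.4f} "
          f"and {ceiling:.4f} Tflop/s")
```

Since 1.31 x 1.61 = 2.1091, slow poke works out to about 61% above the K20X, and the proxy puts the upper end of the error window at roughly 2.16 Tflop/s.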