With every new version of iOS, Apple makes an effort to provide new privacy and security-focused features to make the iPhone and iPad more secure, and iOS 15 is no exception. It is, in fact, a huge leap forward in privacy thanks to features like iCloud Private Relay and Hide My Email. However, Apple’s recent announcement of on-device CSAM scanning has led to significant criticism of Apple’s handling of user privacy going forward.

In this guide, we've outlined all of the privacy and security features that are available in iOS 15 to give MacRumors readers a clear picture of what's new.

iCloud+

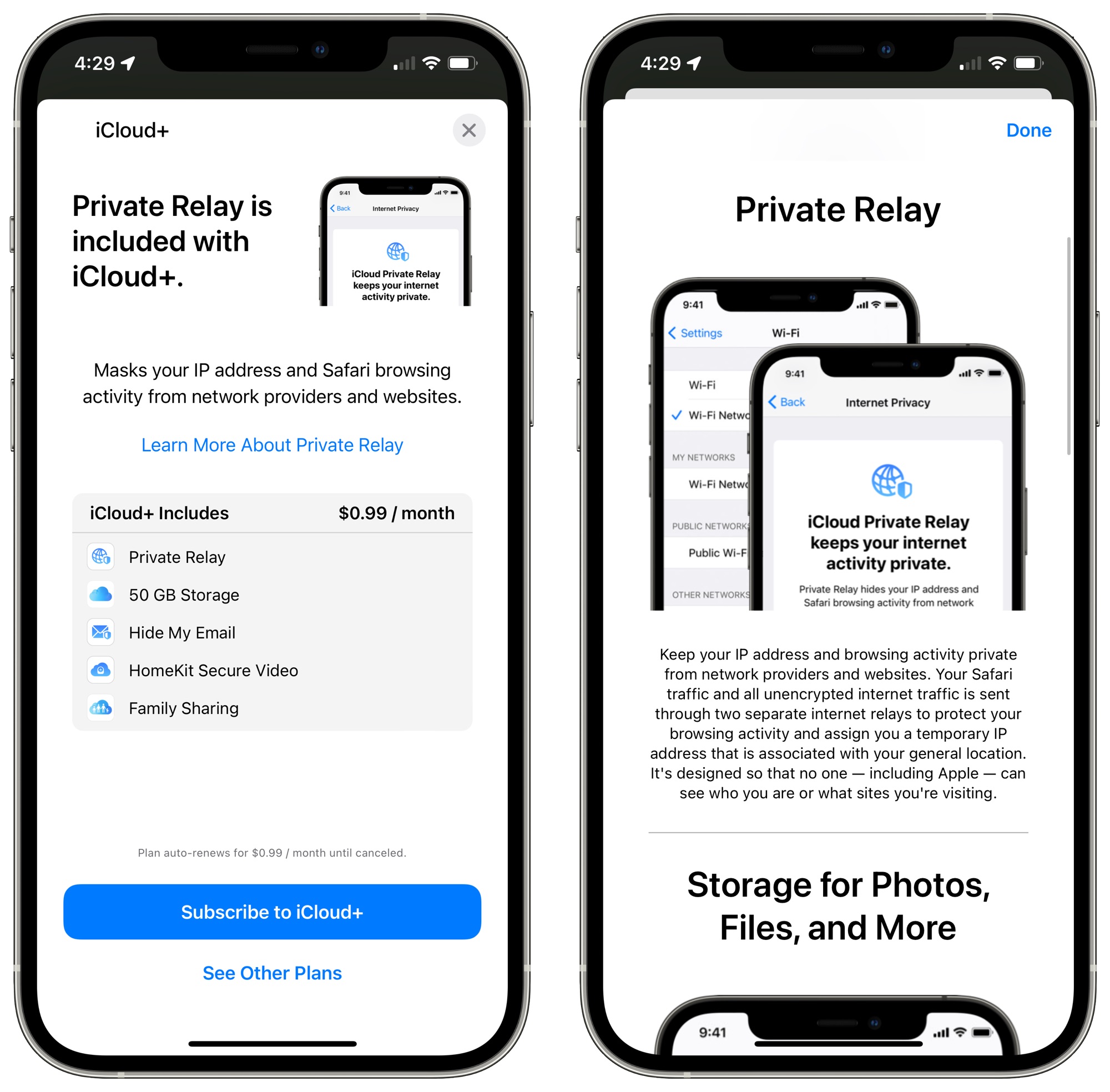

Alongside iOS 15, Apple debuted a new iCloud+ service, which adds features to all paid iCloud accounts. Paid plans start at $0.99 per month: Apple offers a $0.99/month iCloud plan that adds 50GB of storage, a $2.99/month iCloud plan that adds 200GB of storage, and a $9.99/month iCloud plan that adds 2TB of storage.

All three plans also include the iCloud+ features as an incentive to encourage people to upgrade their iCloud plans. iCloud+ offers iCloud Private Relay, Hide My Email, and support for additional HomeKit Secure Video cameras.

The 50GB plan supports one HomeKit Secure Video camera, the 200GB plan supports up to five HomeKit Secure Video cameras, and the 2TB plan supports an unlimited number of HomeKit Secure Video cameras. Previously, the 200GB plan supported one camera and the 2TB plan supported five.

There's also a hidden feature for creating a custom email domain name that can be used with iCloud+ as an alternative to an iCloud Mail address. So if you have a website and you'd prefer to use your own custom email address, that's a possibility. This feature is not yet available and will presumably be accessible when iOS 15 launches. It's expected to be similar to Microsoft 365 and Google Workspace, which allow for this kind of email address personalization while continuing to use Microsoft and Google email servers.

iCloud+ features are automatically applied to all paid iCloud accounts, including Apple One accounts.

iCloud Private Relay

iCloud Private Relay is a new service that encrypts Safari traffic and other unencrypted traffic leaving an iPhone, iPad, or Mac and routes it through two separate internet relays, so that companies cannot use personal information like IP address, location, and browsing activity to create a detailed profile about you.

When you're browsing the web in Safari, for example, websites are not able to see your IP address and location and cannot use that information to track your browsing across different sites.

iCloud Private Relay is not a Virtual Private Network, or VPN. It works by sending all web traffic to a server that is maintained by Apple where information like IP address is stripped. Once the info is removed, the traffic (your DNS request) is sent to a secondary server that's maintained by a third-party company, where it is assigned a temporary IP address, and then the traffic is sent on to its destination.

By having a two-step process that involves both an Apple server and a third-party server, iCloud Private Relay prevents anyone, including Apple, from determining a user's identity and linking it to the website the user is visiting. Based on testing conducted by Dan Rayburn of the Streaming Media Blog, Apple appears to be working with Akamai, Fastly, and Cloudflare.

In this system, Apple knows your IP address and the third-party partner knows the site you're visiting. Because the information is de-linked, neither Apple nor the partner company has a complete picture of the site you're visiting and your location, and neither does the website you're browsing. Normally, websites have access to this data and, combined with cookies, can use it to build a profile of your preferences.
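The split of knowledge between the two relays can be illustrated with a small model. This is a conceptual sketch only, not Apple's protocol: the `Request` type, the function names, and the sample IP addresses (taken from reserved documentation ranges) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Request:
    client_ip: str    # the user's real IP address
    destination: str  # the site being requested


def observed_by_each_party(
    req: Request, temporary_ip: str
) -> Dict[str, Dict[str, Optional[str]]]:
    """Return what each hop can observe in this simplified model."""
    return {
        # First relay (run by Apple): sees who is asking, not what for.
        "first_relay": {"ip": req.client_ip, "destination": None},
        # Second relay (third party): sees the destination, but only a
        # temporary IP address in place of the real one.
        "second_relay": {"ip": temporary_ip, "destination": req.destination},
        # The website sees what the second relay forwards to it.
        "website": {"ip": temporary_ip, "destination": req.destination},
    }


def knows_both(view: Dict[str, Optional[str]], req: Request) -> bool:
    """True if a single party holds both the real IP and the destination."""
    return view["ip"] == req.client_ip and view["destination"] == req.destination


req = Request(client_ip="203.0.113.5", destination="example.com")
views = observed_by_each_party(req, temporary_ip="198.51.100.7")
for party, view in views.items():
    print(party, view, "links user to site:", knows_both(view, req))
```

In this toy model no single party ends up holding both the real IP address and the destination, which is the property the two-relay design is meant to provide.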

With a traditional VPN, you can select an IP address location to use, but that's not the case with iCloud Private Relay. You are limited to your country. Apple says that its two-part relay process is more secure than a VPN, but it's worth noting that it's limited to Safari for web browsing and it does not work for alternate browsers like Chrome.

As outlined on Apple's developer site, Private Relay protects only web browsing in Safari, DNS resolution queries, and insecure http app traffic. There is no complete device-wide protection as might be the case with a VPN.

iCloud Private Relay is subject to local regulatory restrictions and it will not be available in countries that include China, Belarus, Colombia, Egypt, Kazakhstan, Saudi Arabia, South Africa, Turkmenistan, Uganda, and the Philippines.

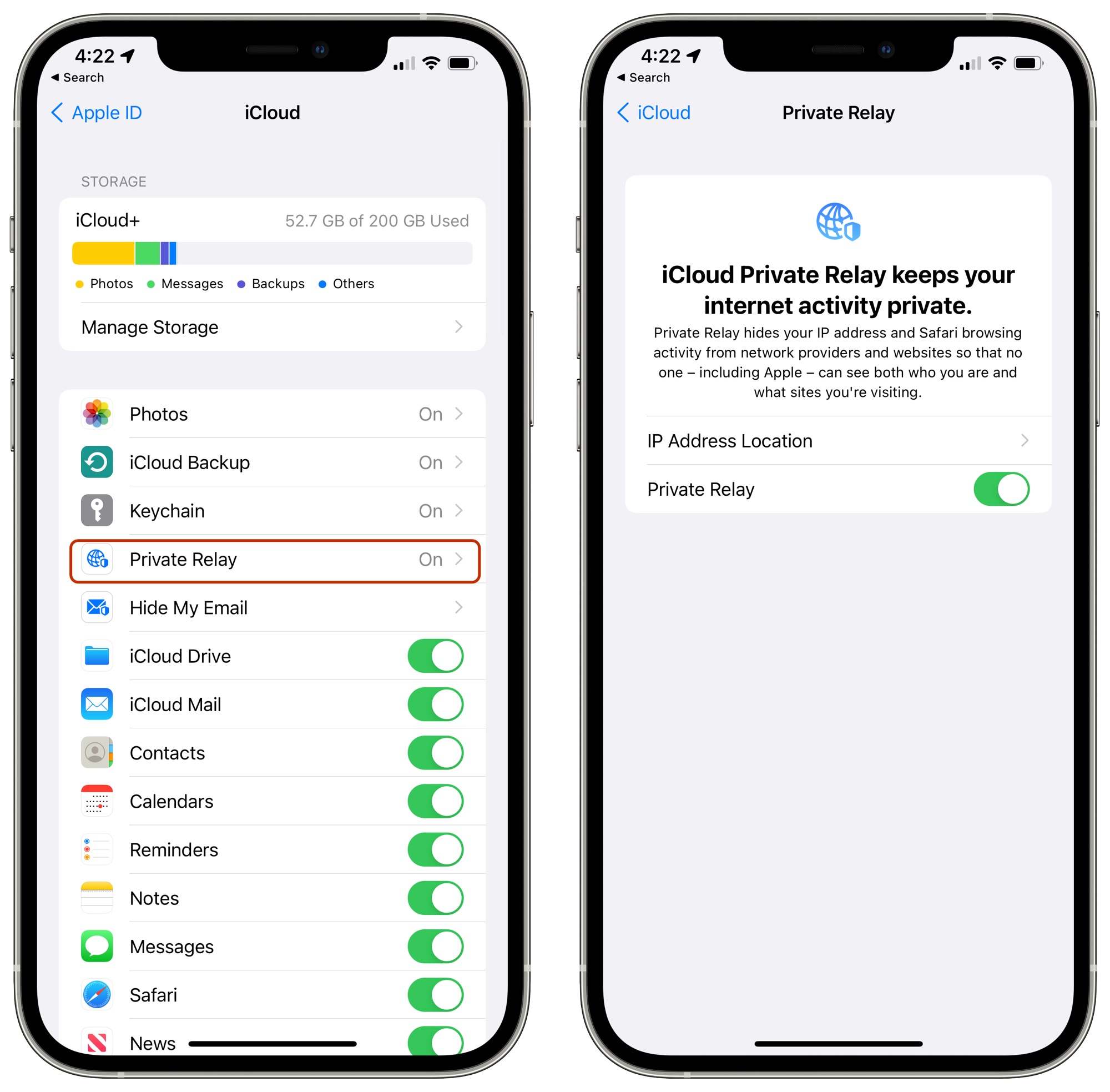

iCloud Private Relay is enabled by default when you upgrade to iOS 15 (or iPadOS 15) but it can be disabled by opening up the Settings app, tapping on your profile, selecting iCloud, and then tapping on the "Private Relay" toggle.

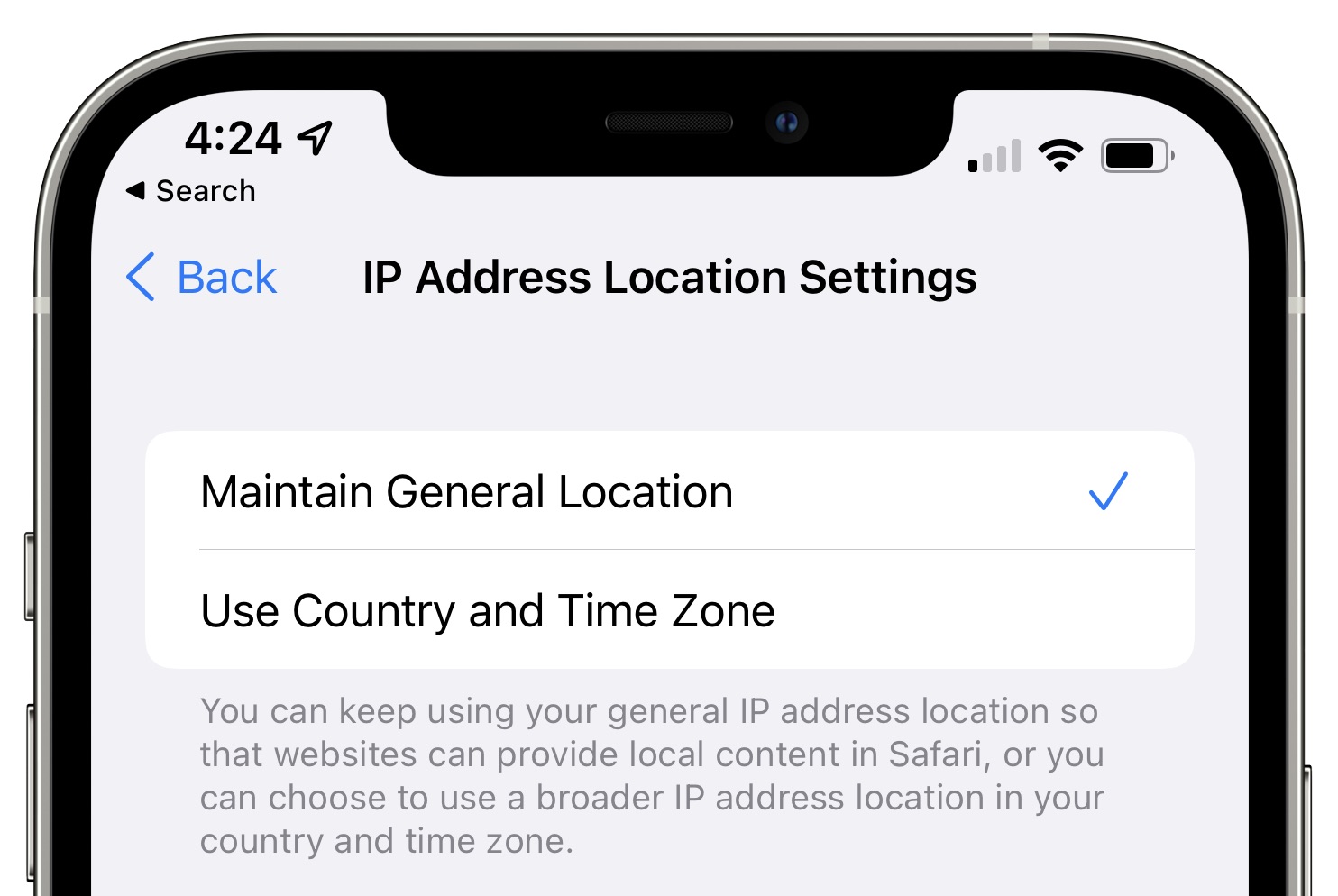

You can choose the IP address location settings for iCloud Private Relay. The "Maintain General Location" option, which is the default, allows websites to provide local content in Safari. The "Use Country and Time Zone" option uses a broader IP address that's only specific to your country and time zone for more privacy.

Under the WiFi and Cellular settings on your device, you can also get quick access to the iCloud Private Relay settings. For WiFi settings, join a WiFi network and then tap on the "i" button to access the iCloud Private Relay toggle. Cellular Network settings will vary, but you need to tap on your phone number under Cellular and then toggle on iCloud Private Relay.

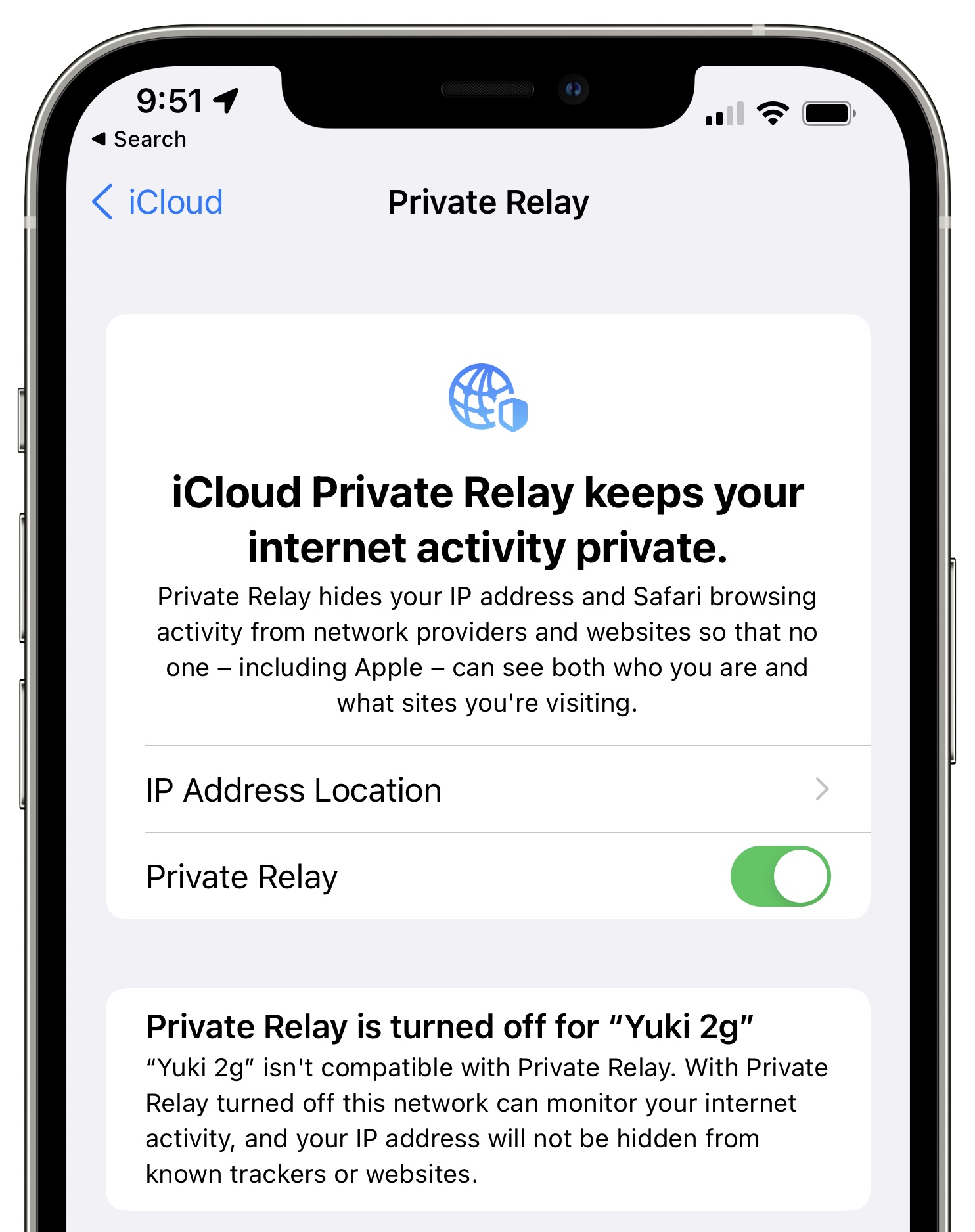

You can control iCloud Private Relay separately for Cellular and WiFi networks, leaving it enabled for one and off for the other. For WiFi, the feature prevents WiFi networks from monitoring internet activity and hides your IP address from known trackers and websites.

For cellular connectivity, iCloud Private Relay prevents cellular providers from monitoring internet activity and hides your IP from known trackers and websites. iCloud Private Relay for cellular is linked to IP address hiding options in the Mail app.

iCloud Private Relay may be unavailable on networks where proxy servers are blocked. Enterprise and education networks, for example, sometimes audit all network traffic, and may block Private Relay. In this situation, you will see a note that Private Relay must be disabled so you can connect to a network, or you can choose another network.

Campuses and businesses can expressly allow proxy traffic from Apple devices for iCloud Private Relay to work, but this must be done on an opt-in basis and each individual campus or business needs to configure the network to allow for Private Relay functionality.

Hide My Email

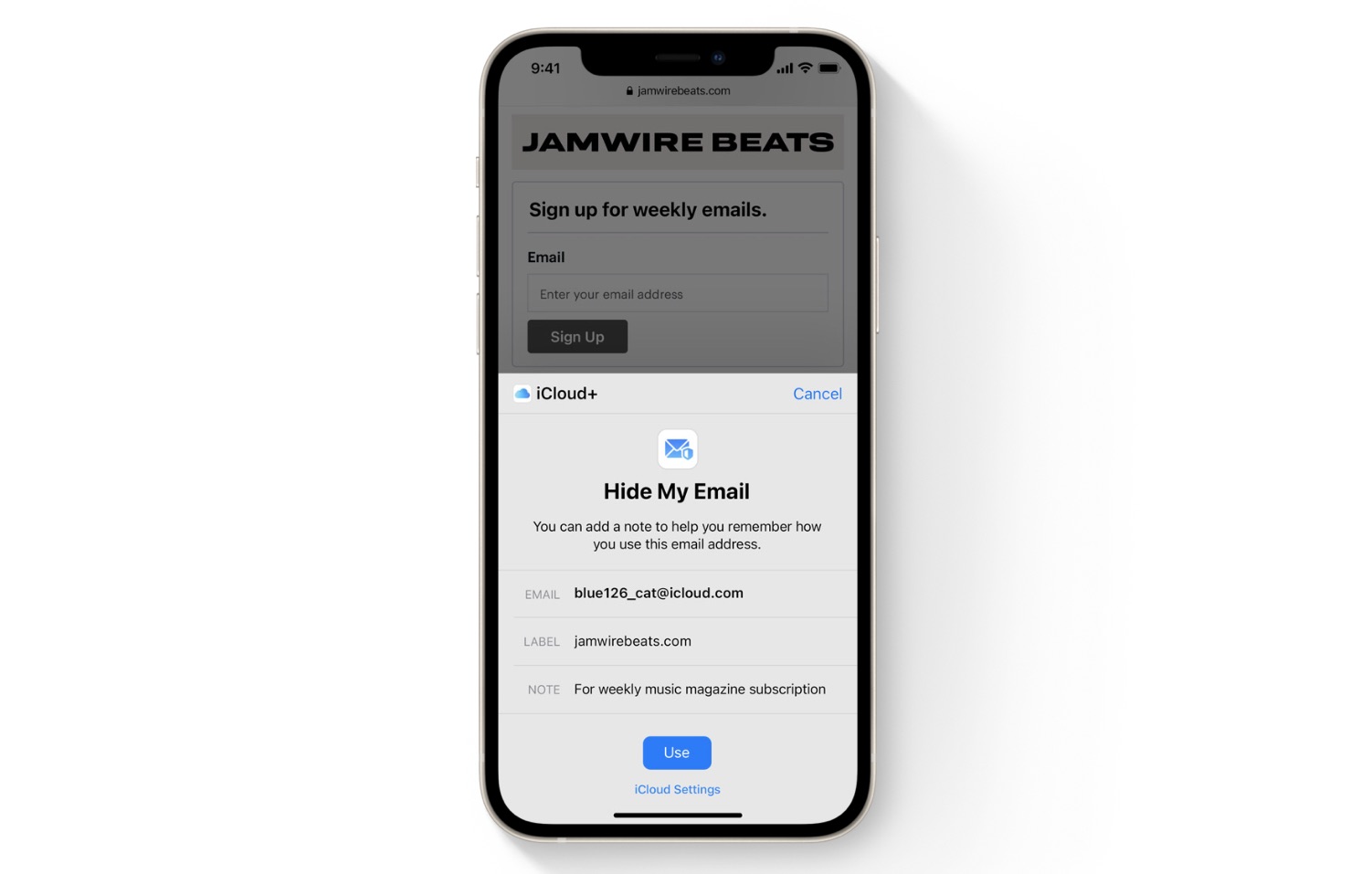

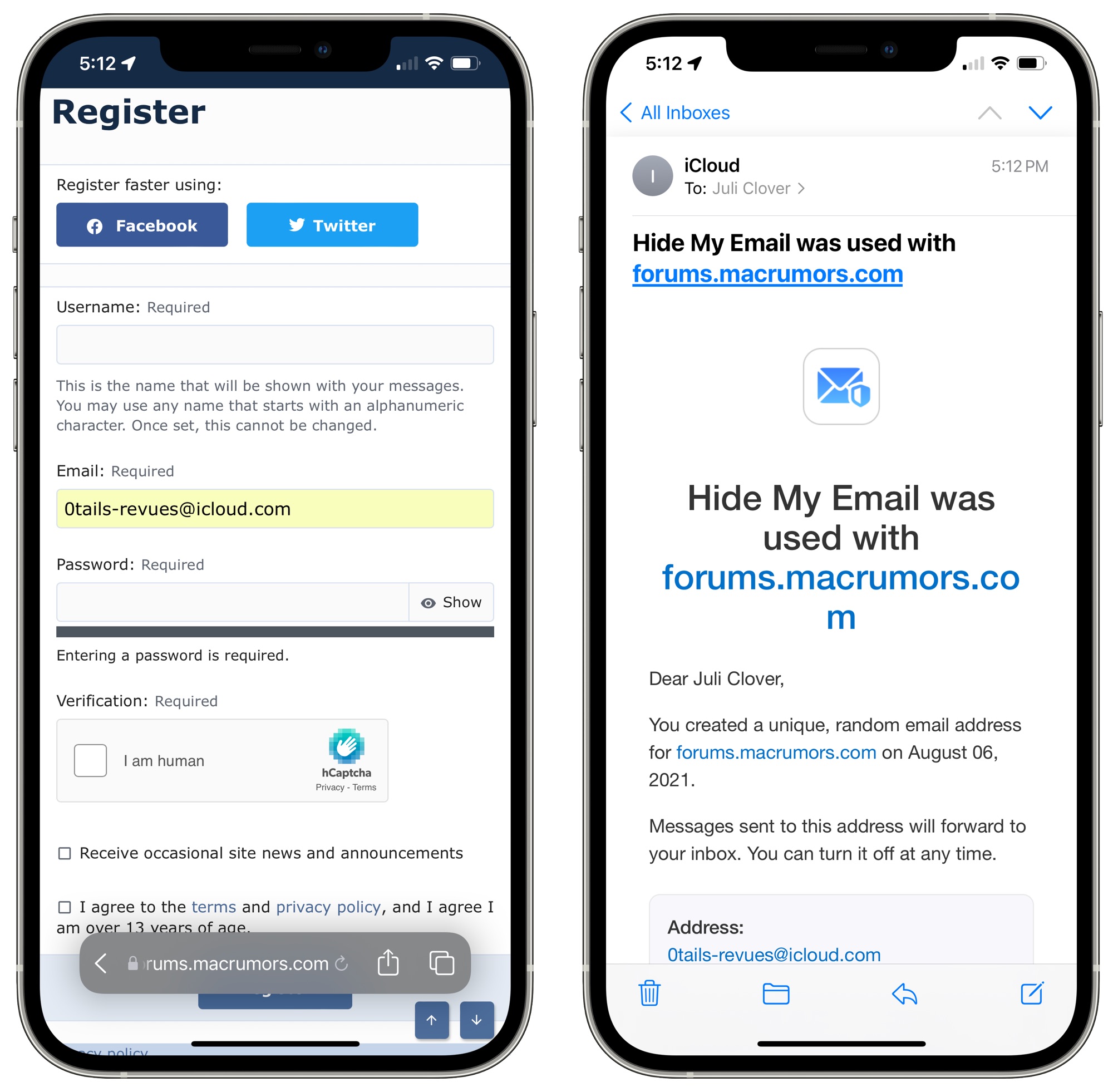

With Hide My Email, iPhone, iPad, and Mac users can create unique, random email addresses that forward to a personal inbox, so it's kind of like a password manager for email addresses. If you need to sign up for a store purchase, for example, you can use a random Apple-created email address to do so.

All the emails sent to the random Apple-created email address are forwarded to you so you can respond if needed, but the merchant does not see your real email address. And if you start getting spam emails from the merchant, you can just delete the email address and put a stop to it.

You can create separate email addresses for all kinds of things, and have a different email for every website if you want. Apple says it has no limit on the number of addresses that you can create (though during beta it is limited to 100), and they can be disabled at will to protect your privacy.
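The forwarding behavior described above can be pictured as a lookup table from random aliases to one real inbox. The following is a minimal sketch under stated assumptions: the `AliasRegistry` class, its method names, the alias format, and the `example.com` and `example.org` addresses are hypothetical and do not reflect how Apple generates or stores addresses.

```python
import secrets
from typing import Dict, Optional


class AliasRegistry:
    """Toy forwarding table: random alias -> one real inbox."""

    def __init__(self, forward_to: str, domain: str = "example.com") -> None:
        self.forward_to = forward_to
        self.domain = domain  # placeholder domain, not Apple's
        self.labels: Dict[str, str] = {}  # active alias -> user-chosen label

    def create(self, label: str) -> str:
        """Generate a new random alias and remember what it is for."""
        alias = f"{secrets.token_hex(6)}@{self.domain}"
        self.labels[alias] = label
        return alias

    def route(self, alias: str) -> Optional[str]:
        """Return the inbox to forward to, or None if the alias was deleted."""
        return self.forward_to if alias in self.labels else None

    def delete(self, alias: str) -> None:
        """Stop forwarding mail sent to this alias."""
        self.labels.pop(alias, None)


registry = AliasRegistry(forward_to="me@example.org")
shop = registry.create("Online store")
news = registry.create("Newsletter")

print(registry.route(shop))  # forwarded to the real inbox
registry.delete(shop)        # the store started sending spam
print(registry.route(shop))  # no longer forwarded
print(registry.route(news))  # other aliases are unaffected
```

Because each merchant gets its own alias, deleting one alias cuts off that sender without touching any other address.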

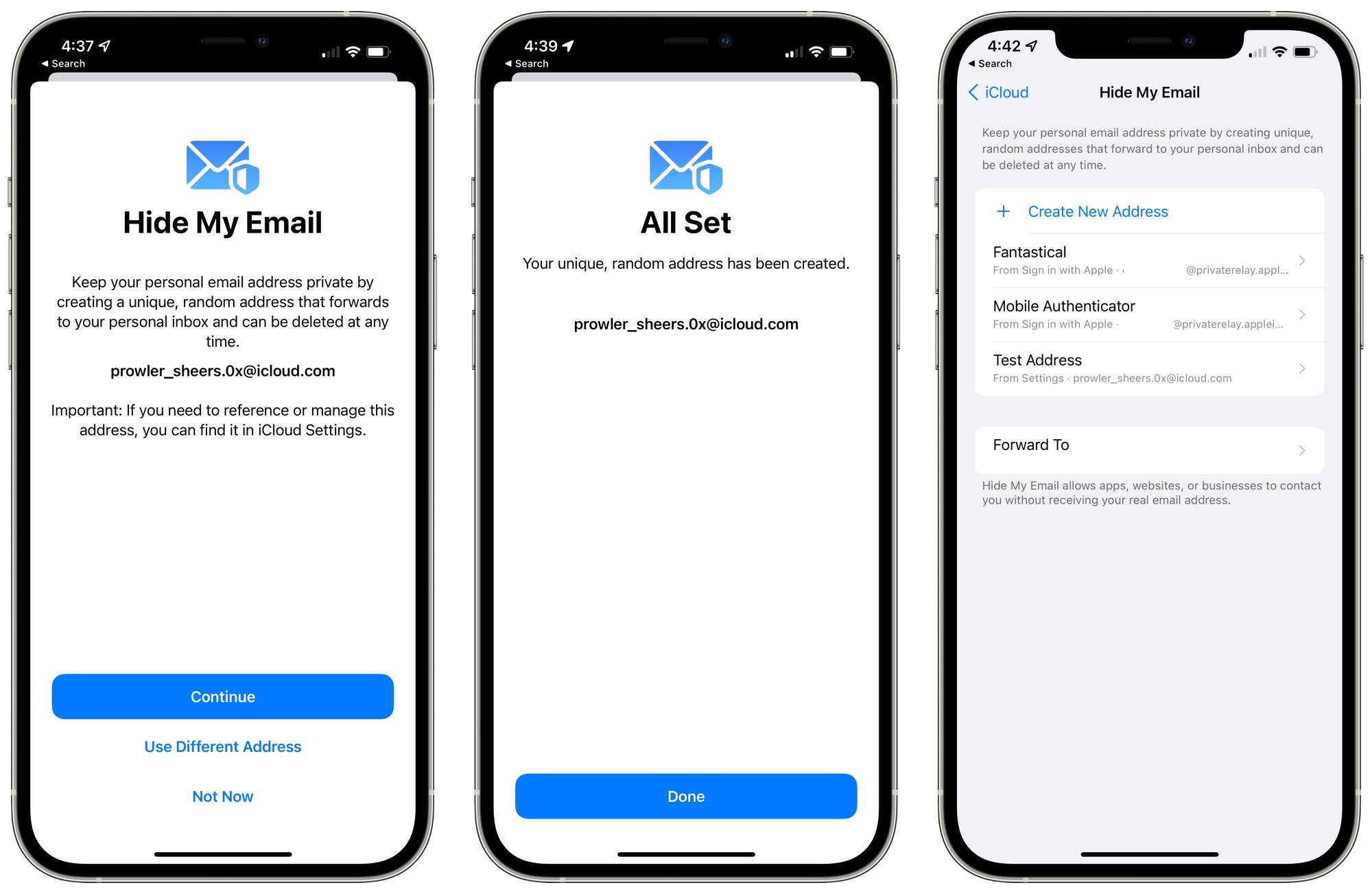

The Hide My Email feature is integrated into Safari, Mail, and iCloud Settings. If you go to Settings and then select your profile and choose the iCloud option, you'll see a "Hide My Email" section. If you tap here, you'll see all of your Sign in with Apple logins, and a "+" button.

Tapping on the "+" button lets you generate a new email address that consists of random words and numbers with an @icloud.com domain. Addresses can be labeled and you can add a note so you know what they're for, and then the generated address can be used in place of your real email address.

You can choose the email address that your Hide My Email addresses forward to. By default, it will select your Apple ID, but if you have other email addresses associated with your account (which can be done under Settings > Apple ID > Name, Phone Numbers, Email), you can select another email address option.

Hide My Email works for both receiving and sending emails. If you respond to an incoming email that was sent to a Hide My Email address in the Mail app, Apple will continue to obscure your email address in the reply. This also appears to work using other email clients, though that may not be universally true and we have not tested with all email clients.

You can also create a Hide My Email address when signing up for an account on the web using Safari, or in an app. The "Hide My Email" option will come up as a suggestion, and if you tap it, Apple will offer a randomly-generated email address for you to use, and will email you to confirm its creation.

Hide My Email is a useful and easily accessible way to create a temporary email address that protects your real email address from spam messages and lets you know exactly which companies sell your information if you start getting unsolicited emails from an address you know to be associated with only one company.

It is worth noting that it is a bit confusing to use in tandem with the built-in Passwords storage feature on the iPhone and Sign in with Apple. Email addresses that you create aren't stored in the Passwords section, you can't add a new email here, and when you create an email you then have to manually store it in Passwords or log in with it on a website to get it added to iCloud Keychain.

App Privacy Report

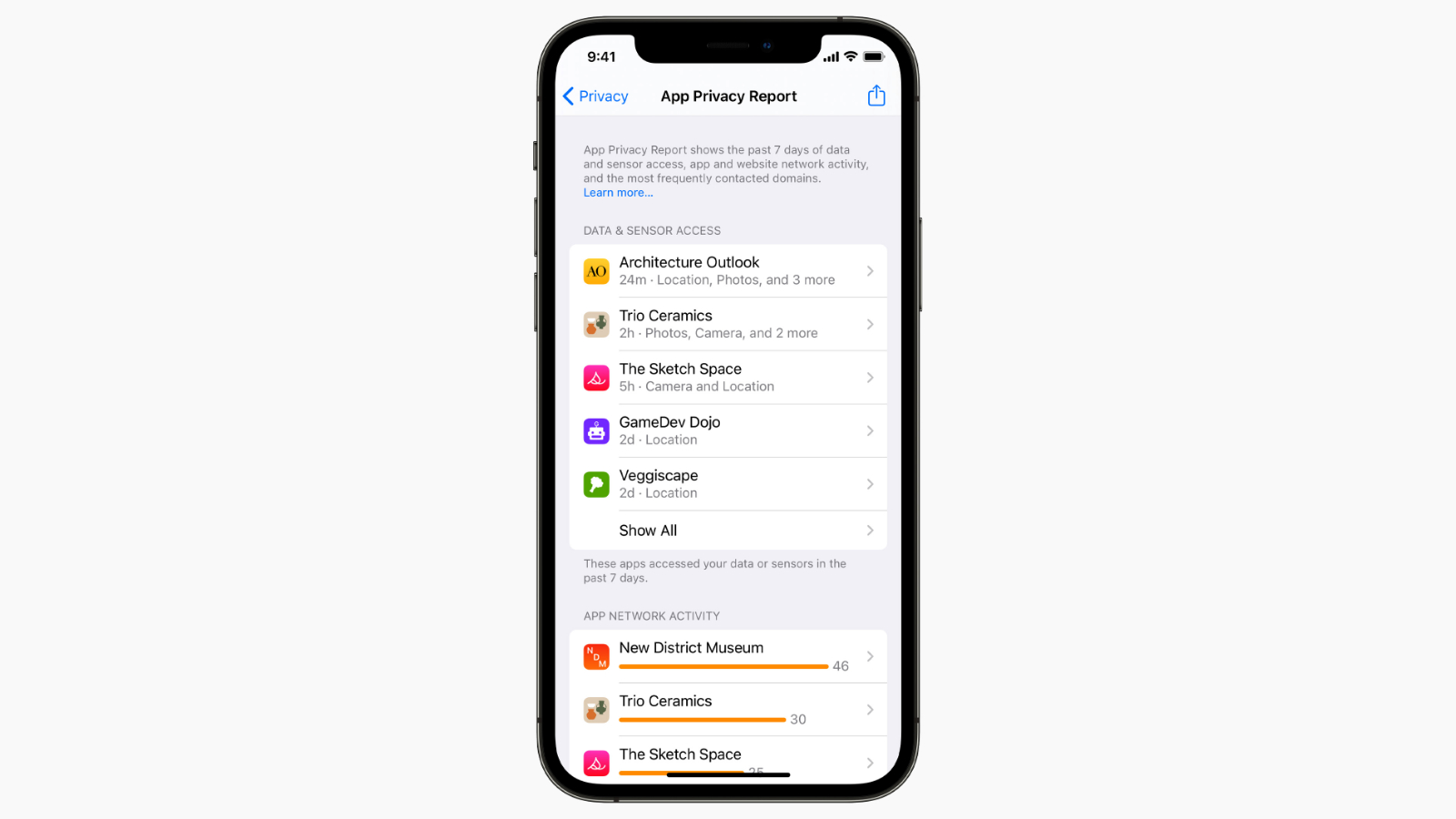

With the App Privacy Report that can be accessed in the Privacy section of the Settings app, Apple now lists which apps are using privacy permissions that have been granted to them such as the camera, microphone, and your location.

The App Privacy Report will let you know which permissions have been accessed and how long ago each app accessed that information. The App Privacy Report will also include details on which third-party domains apps have contacted, but this feature will not be available when iOS 15 launches and will be coming later this year.

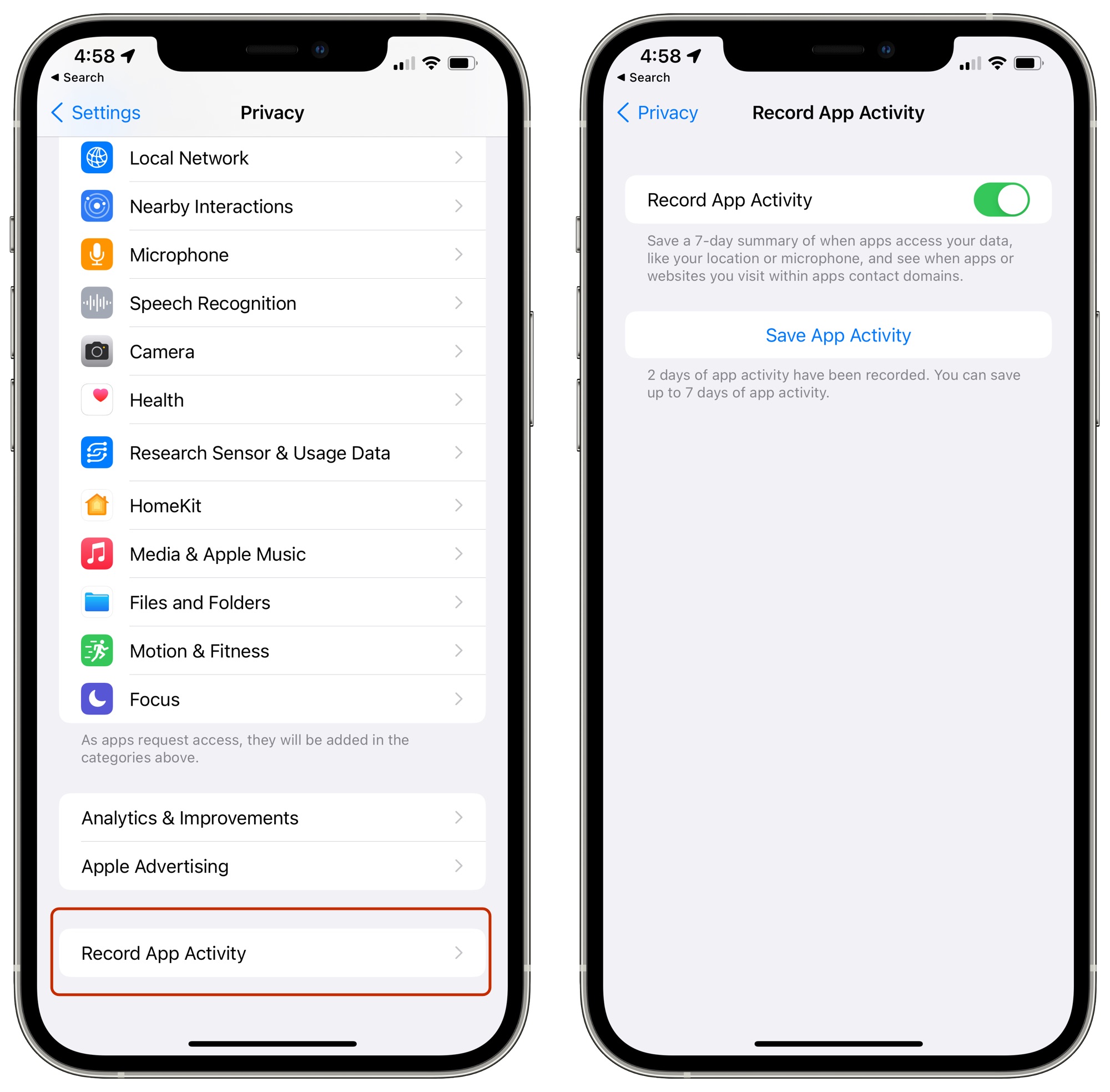

To use App Privacy Report, you need to enable "Record App Activity" in the Privacy section of the Settings app, which can be done by going to Settings, selecting Privacy, and tapping "Record App Activity" at the bottom of the interface to allow the iPhone to collect a 7-day summary of app activity.

Right now, you can actually download a JSON file that has app activity included, but Apple has a much easier viewing method coming.

Mail Privacy Protection

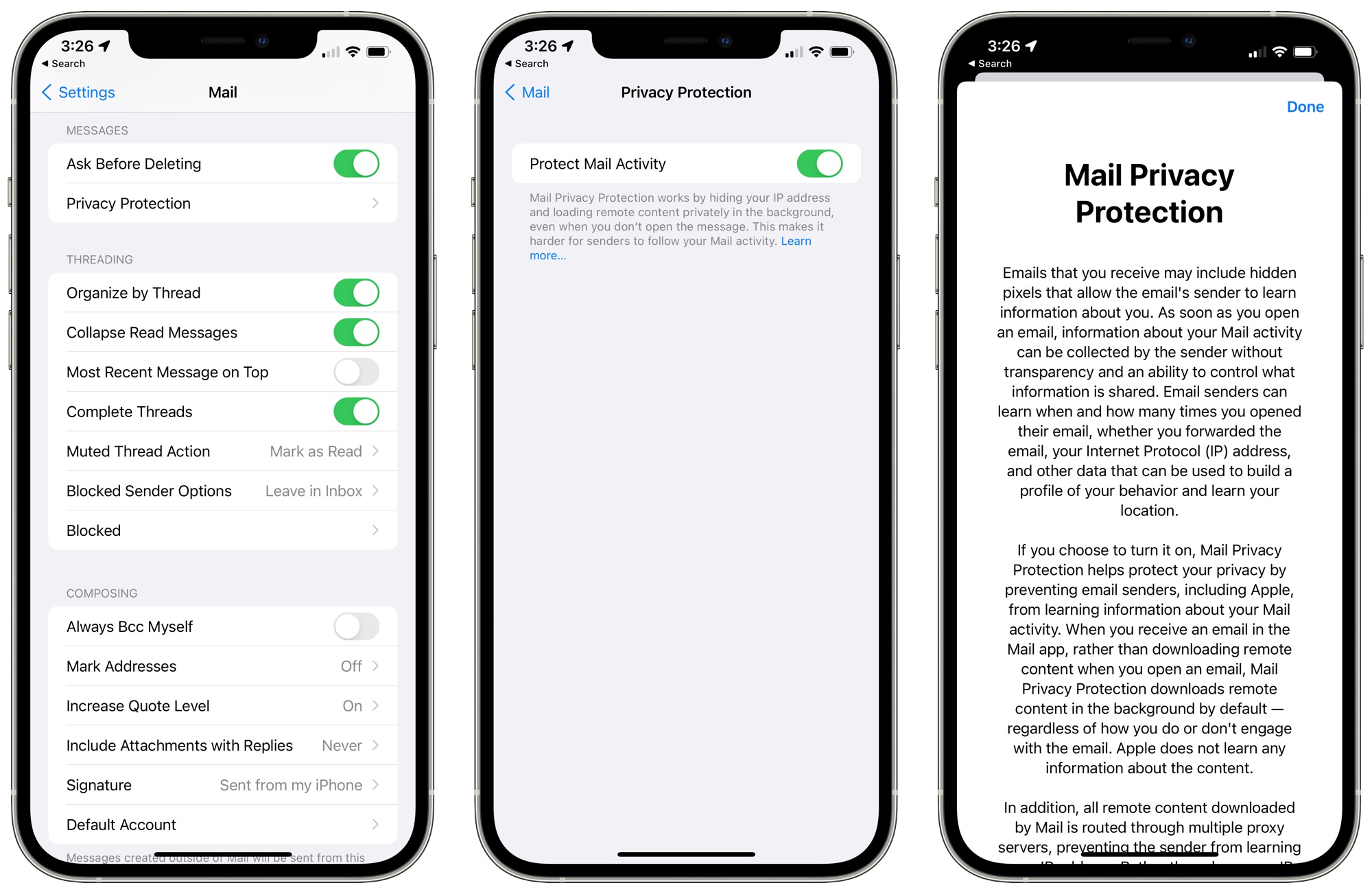

Marketing emails, newsletters, and some email clients use an invisible tracking pixel in email messages to check to see whether you've opened up an email, and in iOS 15, Apple is putting a stop to that practice with Mail Privacy Protection.

There's always been an option to block remote images, which effectively prevents tracking pixels from working, but Mail Privacy Protection is an easier-to-use, more universal solution. It is not on by default and needs to be enabled in the Mail section of the Settings app.

Mail Privacy Protection prevents email senders from tracking whether you opened an email, how many times you viewed an email, and whether you forwarded the email. It does not block remote images, but instead downloads all remote images in the background regardless of whether you've opened an email, essentially ruining the data.

It also has the added benefit of hiding your IP address so senders are not able to determine your location or link your email habits to your other online activity.

Apple routes all content downloaded by the Mail app through multiple proxy servers to strip your IP address, and then it assigns a random IP address that corresponds to the general region you're in. Email senders see generic information rather than specific information about you.
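To see why background downloading through a proxy ruins the tracking data, consider what a sender's pixel server would log with and without the feature. This is a simplified simulation, not Apple's implementation: the `pixel_hits` function, the message IDs, and the IP addresses (reserved documentation ranges) are assumptions for illustration.

```python
from typing import Iterable, List, Set, Tuple

Hit = Tuple[str, str]  # (message_id, ip_address_seen_by_sender)


def pixel_hits(
    messages: Iterable[str],
    opened: Set[str],
    user_ip: str,
    protected: bool,
    proxy_ip: str = "198.51.100.7",
) -> List[Hit]:
    """Return the pixel requests a sender's server would log."""
    hits: List[Hit] = []
    for message_id in messages:
        if protected:
            # Remote content is fetched in the background for every
            # message, via a proxy, whether or not it is ever opened.
            hits.append((message_id, proxy_ip))
        elif message_id in opened:
            # Without protection, the pixel loads only on open and
            # exposes the reader's own IP address.
            hits.append((message_id, user_ip))
    return hits


inbox = ["m1", "m2", "m3"]
opened = {"m2"}

without = pixel_hits(inbox, opened, "203.0.113.5", protected=False)
with_mpp = pixel_hits(inbox, opened, "203.0.113.5", protected=True)
print(without)
print(with_mpp)
```

Without protection, the log reveals exactly which message was opened and from which IP address. With protection, every message looks "opened" from the same proxy address, so the sender learns nothing useful.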

Mail Privacy Protection is an alternative to blocking all remote content and if the feature is enabled, it overrides the "Block All Remote Content" and the "Hide IP Address" settings.

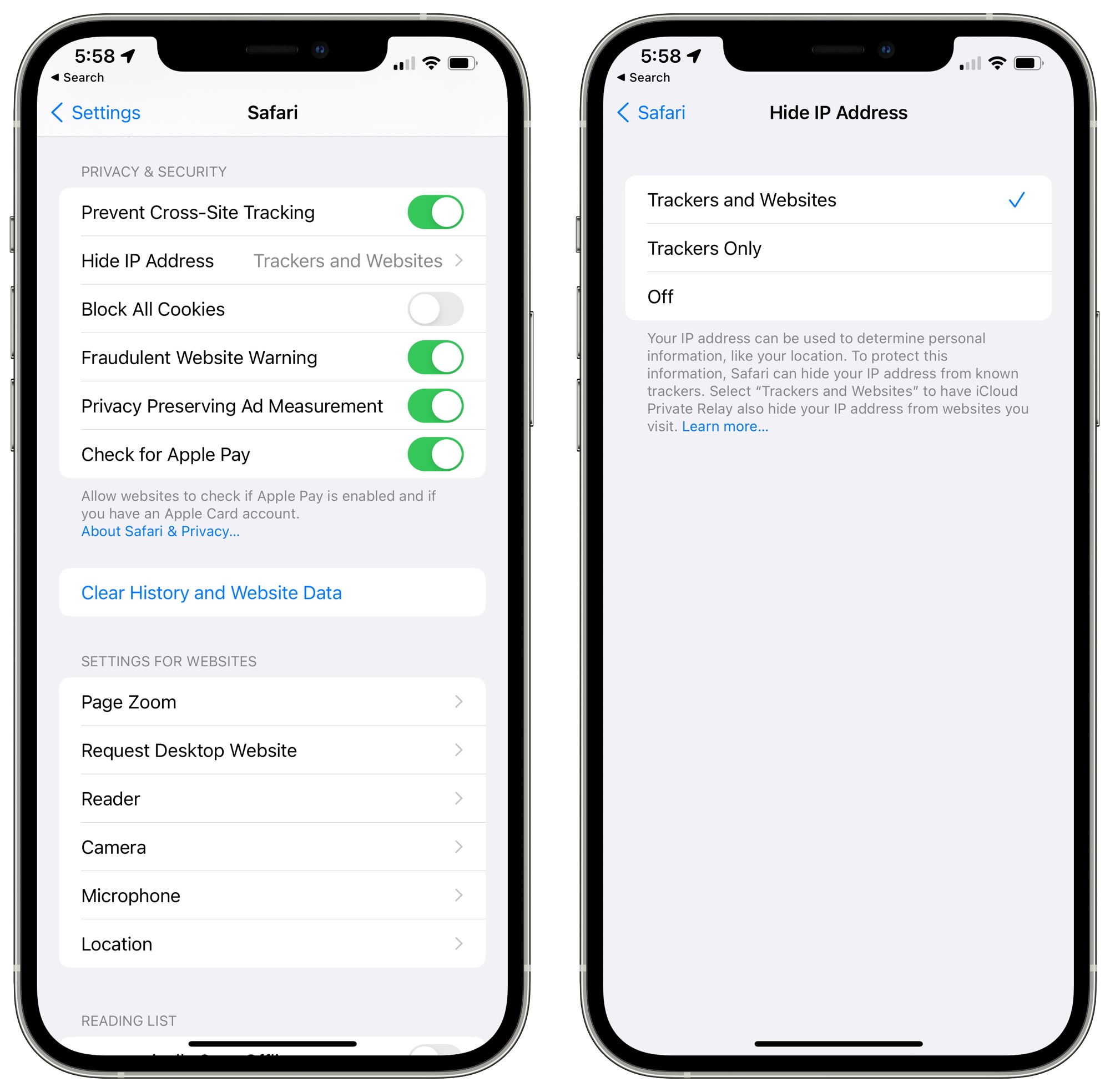

Safari IP Protection

Apple updated its Intelligent Tracking Prevention feature in Safari to keep trackers from accessing your IP address to build a profile about you. Safari is also protected with iCloud Private Relay if the feature is enabled, but you can block trackers from accessing your IP without using Private Relay.

Secure Paste

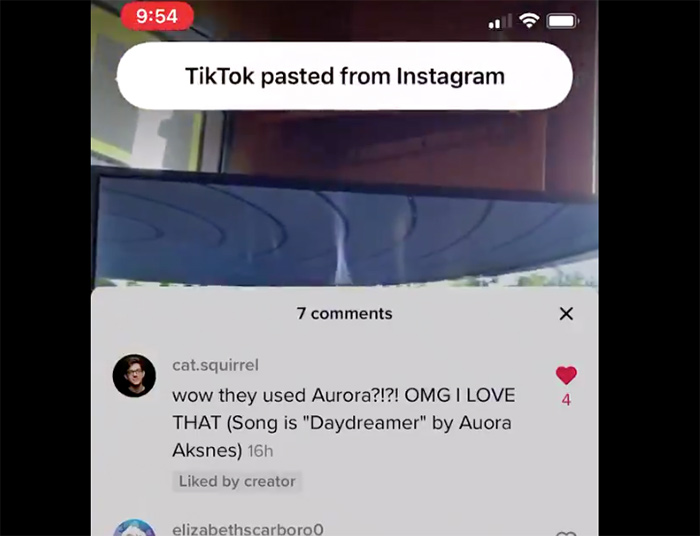

Secure Paste is a new option that developers can build into apps. With this feature enabled, if you copy something from app A and then go to use app B, app B will not be able to see what's on your clipboard until you actively paste it into app B.

Secure Paste was implemented after a copy-paste privacy issue that surfaced last year. Many iPhone and iPad apps were "snooping" on clipboard data and could see anything that was copied to the pasteboard without the user being aware.

Apple in iOS 14 added a small banner that lets you know whenever an app accesses the clipboard, which prevents apps from viewing clipboard content without your knowledge, but iOS 15 takes the feature further and prevents developers from seeing the clipboard entirely until you paste something from one app to another.

With Secure Paste, when you do copy something from one app and paste it into another that has the feature enabled, you will not see the copy paste notification because the viewing of the clipboard did not happen without express user consent.

Share Current Location

If you need to share your location in an app, the Share Current Location privacy feature lets you share your location just one time instead of giving developers continual access. This option provides location sharing for a single session, and ends location access after that session is complete.

Limited Photos Library Improvements in Third-Party Apps

In iOS 14, Apple added a feature that lets you grant third-party apps access to only a few photos, preventing them from seeing your entire Photo Library. With limited access enabled, the usage experience is improving in iOS 15 as apps are now able to offer a simplified image selection workflow.

On-Device Speech Processing and Personalization for Siri

In iOS 15, if you have a device with an A12 chip or later, Siri speech processing and personalization are done on-device. Siri is faster at processing requests, but the real benefit is better security.

Most Siri audio requests are kept entirely on your iOS device and are not uploaded to Apple's servers for processing. Siri's speech recognition improves over time, as does Siri's understanding of your personal preferences.

On-device processing is available in German (Germany), English (Australia, Canada, India, UK, U.S.), Spanish (Spain, Mexico, U.S.), French (France), Japanese (Japan), Mandarin Chinese (China mainland), and Cantonese (Hong Kong).

For more on on-device processing and the new Siri features coming in iOS 15, we have a dedicated Siri guide.

Apple Card Advanced Fraud Protection

Apple Card users can opt in to Advanced Fraud Protection in iOS 15, which provides a security code that changes regularly in order to make online card number transactions more secure.

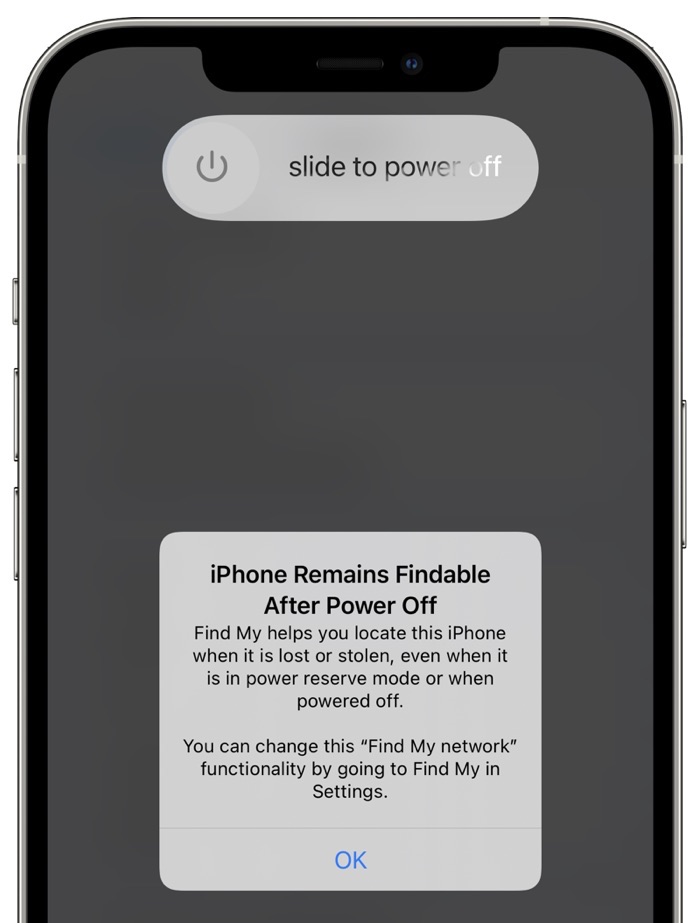

Locate an iPhone That's Powered Off or Erased

Apple in iOS 15 is making some major security-focused improvements to the Find My app, making it harder than ever for thieves to steal and fence an iPhone.

With iOS 15 installed, an iPhone that's turned off or erased is still trackable through the Find My app thanks to Apple's Find My Network, so turning an iPhone off or wiping it is no longer going to keep it from being tracked down.

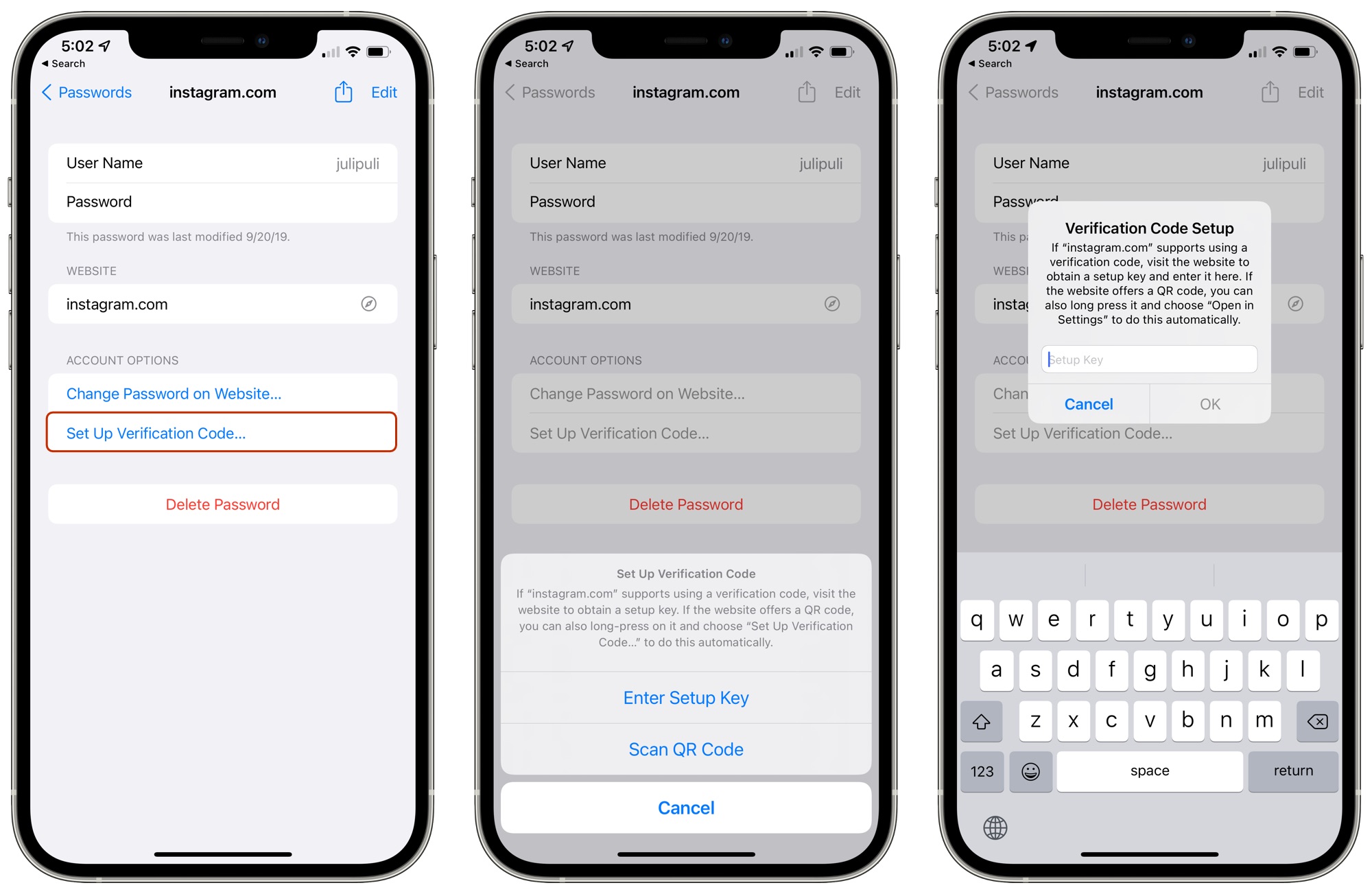

Built-In Two-Factor Authenticator

Many websites use two-factor authentication as an additional security measure alongside a password, and typically, two-factor authentication that's not based on a phone number requires a third-party app like Authy or Google Authenticator.

That's no longer the case in iOS 15 because Apple has added a Verification Code option to the Passwords section of the Settings app, so you can create and access two-factor authentication codes right on the iPhone without the need for another service.

In the Passwords section of the Settings app (which is where your iCloud Keychain passwords are stored), you can tap into any password and then select "Set Up Verification Code..." to get two-factor authentication working. The iPhone can use a setup key or scan a QR code, which is how most authentication apps work.

Once saved, you can get a code from Passwords when logging into a website, but codes will also autofill when you're logging in on an Apple device with autofill enabled.

So if you're logging into a site like Instagram, for example, iCloud Keychain autofills your username, your password, and can also autofill the two-factor authentication code so your login is secure, but also more convenient.
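Authenticator apps that work from a setup key or QR code generally implement the time-based one-time password (TOTP) scheme from RFC 6238. The guide does not say which algorithm Apple uses, so the sketch below assumes standard TOTP with HMAC-SHA1 and a 30-second step; the `totp` function name and its parameters are illustrative.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code from a Base32 setup key."""
    # Normalize the setup key and restore any stripped Base32 padding.
    cleaned = secret_b32.upper().replace(" ", "")
    cleaned += "=" * (-len(cleaned) % 8)
    key = base64.b32decode(cleaned)

    # The moving factor is the number of time steps since the Unix epoch.
    now = time.time() if at is None else at
    counter = int(now // step)

    # HOTP (RFC 4226): HMAC the counter, then dynamically truncate.
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


# Example with the shared secret from the RFC 6238 test vectors.
setup_key = base64.b32encode(b"12345678901234567890").decode()
print(totp(setup_key))  # current 6-digit code; changes every 30 seconds
```

Because the code depends only on the shared setup key and the current time, the device and the website can each compute it independently, which is what makes autofill possible without contacting another service.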

Child Safety Protections and Privacy Concerns

Apple in iOS 15, iPadOS 15, and macOS Monterey is adding several tools that are aimed at protecting children from sensitive photos and cutting down on the spread of Child Sexual Abuse Material (CSAM). All of these features are launching in the United States first, and will involve scanning photos before images are uploaded to iCloud Photos and the messages of children if implemented by parents, with all scanning done on-device.

Security researchers, concerned users, the Electronic Frontier Foundation, and others have criticized Apple's plans to analyze content because of the possible future implications of such a system.

The general sentiment is that if Apple can scan for child abuse now, the system could be adapted for other purposes in the future. Apple can "scan for anything tomorrow," wrote Edward Snowden, who called Apple's plan "mass surveillance."

The EFF referred to Apple's Messages technology as a "proposed backdoor" and said that it "breaks key promises of the messenger's encryption" and "opens the door to broader abuses" because Apple could expand machine learning parameters to look for additional types of content. "That's not a slippery slope; that's a fully built system just waiting for external pressure to make the slightest change," wrote the EFF.

The technologies and new features Apple is implementing are explained in more depth below.

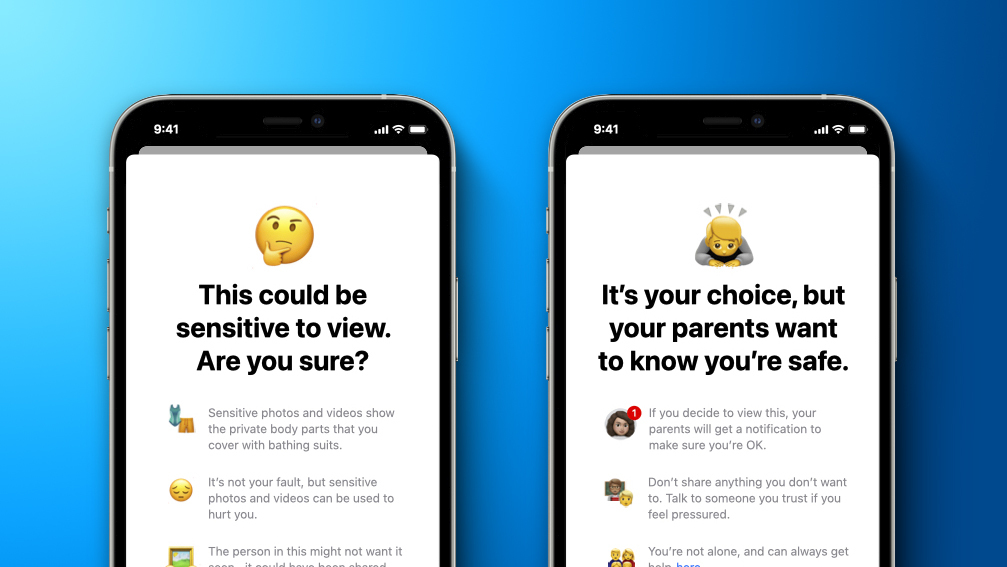

Communication Safety for Kids

For child accounts that have Family Sharing enabled, parents can turn on a feature that will use on-device machine learning to scan photos and warn parents if their kids are viewing sensitive content. This "Communication Safety" option is limited to accounts used by children that are under the age of 18.

If an Apple device linked to a child's Apple ID detects a sexually explicit photo, it will be blurred and the child will be warned in simple language against viewing it. If the child proceeds to view the content anyway, parents can opt to get a notification, with the aim of keeping the child safe from predators. Parental notifications are limited to accounts for children that are under 13.

This Messages scanning feature does not work for adult accounts and can't be implemented outside of Family Sharing, and Apple says that communications continue to be private and unreadable by Apple. Apple is implementing this feature to give parents the tools to protect their children in online communications.

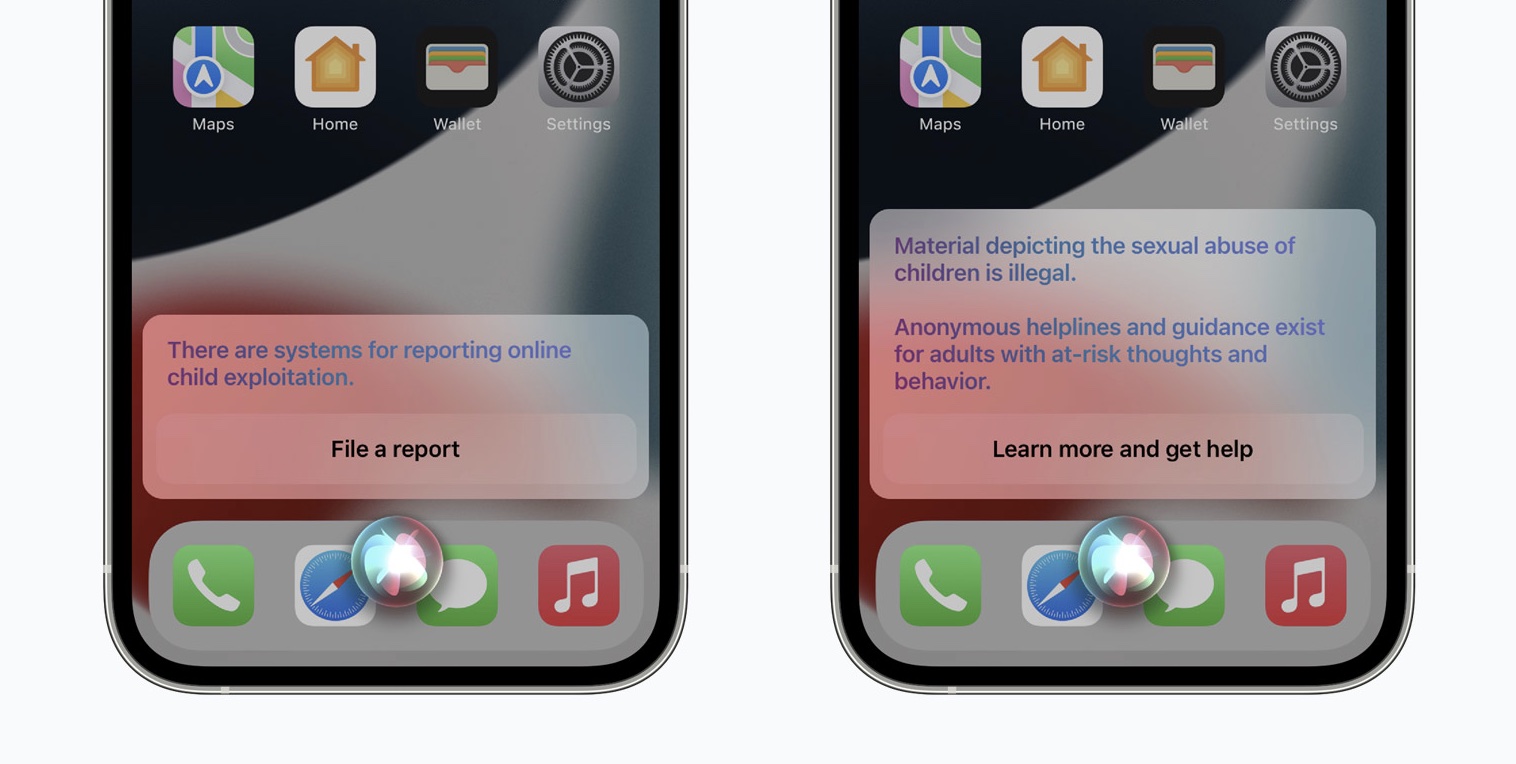

Siri and Search Restrictions

If users attempt to search for Child Sexual Abuse Materials (CSAM) topics using Siri or the built-in Search tools on Apple devices, Siri and Search will intervene and prevent the search from taking place.

Siri and Search will also provide parents and children with "expanded information and help" if they encounter unsafe situations while using the built-in search tools.

Limiting CSAM Spread

Apple in iOS 15 and iPadOS 15 will scan a user's photos to look for known Child Sexual Abuse Materials, with plans to report findings to the National Center for Missing and Exploited Children (NCMEC). iPhones and iPads will download an unreadable database of hashes that are linked to known CSAM images, comparing this database to the photos on a person's device. Apple's hashing technology, NeuralHash, analyzes an image and converts it into a unique number specific to that image.

Apple's on-device matching process happens before an image is stored in iCloud Photos. If a photo on a user's device matches with a known CSAM hash, the device creates a cryptographic safety voucher, which is uploaded to iCloud Photos along with the image.

When a certain threshold of matches is exceeded, Apple can interpret the contents of the vouchers for CSAM matches. Apple does a manual review of each report to confirm the match, then the user's iCloud account is disabled and a report is sent to NCMEC. Apple says that this process has an "extremely high level of accuracy" with an error rate of "less than one in one trillion accounts per year" to ensure that accounts are not incorrectly flagged.
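The threshold idea can be sketched in a few lines. This is a heavily simplified illustration and not Apple's system: SHA-256 stands in for NeuralHash (it only matches byte-identical data), the cryptographic safety vouchers are omitted entirely, and the function names and threshold value are hypothetical.

```python
import hashlib
from typing import Iterable, Set


def stand_in_hash(image_bytes: bytes) -> str:
    # Stand-in only: SHA-256 matches exact bytes, whereas the guide
    # describes NeuralHash, a different, image-specific hashing scheme.
    return hashlib.sha256(image_bytes).hexdigest()


def count_matches(images: Iterable[bytes], known_hashes: Set[str]) -> int:
    """Count uploads whose hash appears in the known-hash database."""
    return sum(1 for img in images if stand_in_hash(img) in known_hashes)


def review_triggered(images: Iterable[bytes], known_hashes: Set[str],
                     threshold: int) -> bool:
    """Nothing is surfaced for review until matches exceed the threshold."""
    return count_matches(images, known_hashes) > threshold


# Placeholder byte strings stand in for image files.
known = {stand_in_hash(b"known-1"), stand_in_hash(b"known-2")}
few = [b"known-1", b"holiday", b"cat"]
many = [b"known-1", b"known-2", b"cat"]

print(review_triggered(few, known, threshold=1))
print(review_triggered(many, known, threshold=1))
```

The point of the threshold is that a single match, which could be a false positive, does not by itself trigger a review; only a collection of matches above the limit does.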

Apple is not scanning a user's personal photos for content and is instead looking for photo hashes that match specific, already known CSAM images. While scanning is done on-device, the flagging is not done until an image is stored in iCloud Photos.

Apple says that its NeuralHash method is an effective way to check for CSAM in iCloud Photos while protecting user privacy. It is "significantly more privacy preserving" than cloud-based scanning methods, according to Apple, because it only reports users who have a collection of known CSAM stored in iCloud Photos. Apple does not see photos that have not been uploaded to iCloud Photos, so disabling iCloud Photos effectively turns off the feature.

Guide Feedback

Have questions about the privacy and security features in iOS 15, know of a feature we left out, or want to offer feedback on this guide? Send us an email here.

Note: Due to the political or social nature of the discussion regarding this topic, the discussion thread is located in our Political News forum. All forum members and site visitors are welcome to read and follow the thread, but posting is limited to forum members with at least 100 posts.

Article Link: iOS 15 Privacy Guide: Private Relay, Hide My Email, Mail Privacy Protection, App Reports and More


- https://www.macrumors.com/guide/ios-15-privacy/
