Subject: Mixing DIMM sizes in the MP7,1

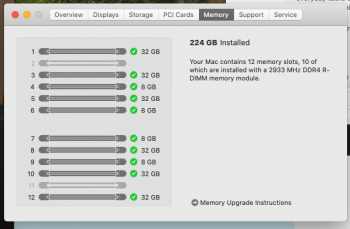

My current approach for setting up 288 GB RAM is to add 8x 32GB R-DIMMs to my stock 4x 8GB R-DIMMs.

After reading the article here -> https://macperformanceguide.com/MacPro2019-MemoryBandwidth.html I'm a bit concerned that my approach will hurt memory performance.
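For reference, here is a quick sanity check of the numbers as a minimal Python sketch. The six-channel, two-slots-per-channel layout is my assumption about the MP7,1 and is not taken from the article; the variable names are just for illustration.

```python
# Capacity check for the planned configuration (sizes in GB).
stock = [8] * 4        # 4x 8GB R-DIMMs that shipped with the machine
added = [32] * 8       # 8x 32GB R-DIMMs to be added

dimms = stock + added
total_gb = sum(dimms)
print(f"{len(dimms)} DIMMs, {total_gb} GB total")

# Assumption: six memory channels with two slots each (12 slots in total).
CHANNELS = 6
per_channel_if_even = total_gb / CHANNELS  # capacity each channel would need

# Capacities one channel can have when it holds two of these DIMMs.
sizes = set(dimms)
pair_sizes = {a + b for a in sizes for b in sizes}

# With all 12 slots filled, an even split needs every channel at this size.
balanced_possible = per_channel_if_even in pair_sizes
print(f"even split needs {per_channel_if_even:g} GB per channel")
print(f"possible per-channel sizes: {sorted(pair_sizes)}")
print(f"balanced layout possible: {balanced_possible}")
```

Under that assumption the total does come to 288 GB, but no placement gives every channel the same capacity, which may be relevant to the bandwidth question.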

Our office performs two major tasks:

- Film editing, primarily using Adobe software (though we will be exploring FCPX, as it appears to offer extreme benefits with the MP7,1).

- Fluid dynamics simulations (CFD): multi-threaded, intensive on CPU cores and memory, and at times I/O-intensive.

My concern is with the second task, CFD.