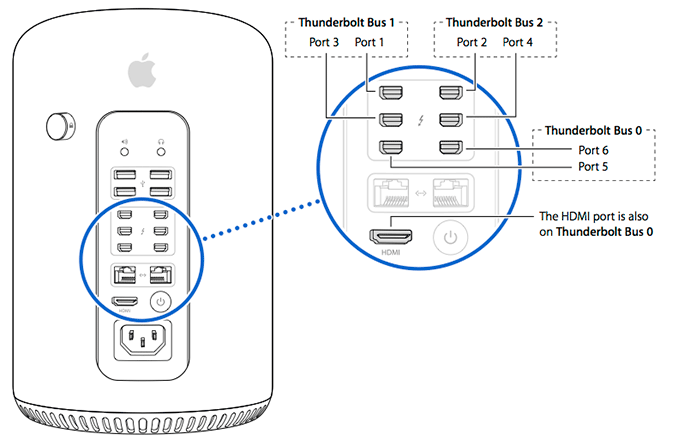

> Apple states that monitors should ideally be on separate TB busses: 1, 2, or 3.

What Apple fails to do is illustrate how the GPU cards are hooked up. Given the TB and HDMI groupings, it seems as though they have hooked one GPU to TB buses 1 and 2 and the other to TB bus 0 (so it can drive HDMI... what HDMI has to do with Thunderbolt I have no clue; TB doesn't need to be in that loop at all). I think their "TB bus" terminology might be a bundled grouping of the PCIe and DisplayPort inputs to each TB controller. [And the part of the DisplayPort bundle for bus 0 is peeled off and passed through an HDMI converter before it ever gets to the TB controller.]
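To make the guessed wiring concrete, here is a minimal sketch that encodes it as lookup tables and checks Apple's "one display per bus" advice. The specific port-to-bus assignments below are HYPOTHETICAL: Apple's diagram does not say which GPU feeds which bus, and the names `GPU-A`/`GPU-B` are placeholders.

```python
# HYPOTHETICAL topology following the guess above: one GPU feeds TB buses
# 1 and 2, the other feeds TB bus 0 plus HDMI. Port numbers are illustrative.
BUS_TO_GPU = {0: "GPU-B", 1: "GPU-A", 2: "GPU-A"}
PORT_TO_BUS = {
    "1": 2, "2": 2,
    "3": 1, "4": 1,
    "5": 0, "6": 0,
    "HDMI": 0,  # assumed to be tapped off bus 0's DisplayPort bundle
}


def gpu_for_port(port: str) -> str:
    """Return the GPU assumed to drive a given port."""
    return BUS_TO_GPU[PORT_TO_BUS[port]]


def displays_on_separate_buses(display_ports: list[str]) -> bool:
    """True if no two displays share a TB bus (Apple's stated recommendation)."""
    buses = [PORT_TO_BUS[p] for p in display_ports]
    return len(buses) == len(set(buses))


print(gpu_for_port("HDMI"))                         # GPU-B
print(displays_on_separate_buses(["1", "3", "5"]))  # True
print(displays_on_separate_buses(["1", "2"]))       # False
```

Swap in the real mapping from System Information if you have it; the checks themselves don't depend on the guess.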

> A 27" Cinema or TB 1.0 display? Each bus is 5 GB/s, but I forget the bandwidth needed for the 27" monitor. Well, according to TB 1.0 it's half the bus, so that must mean 2.5 GB/s, leaving you with another 2.5 GB/s for data I/O.
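For a ballpark on the "I forget the bandwidth" part, the raw pixel stream of a 27" 2560×1440 panel can be computed directly. This sketch assumes 24 bits per pixel at 60 Hz and ignores blanking intervals and link-encoding overhead, so the actual link requirement is somewhat higher; how much Thunderbolt reserves for the stream is a separate question it does not answer.

```python
def display_stream_gbps(width: int, height: int, refresh_hz: float,
                        bits_per_pixel: int = 24) -> float:
    """Raw active-pixel bandwidth in Gb/s (no blanking, no link encoding)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


# 27" Cinema / Thunderbolt Display resolution at 60 Hz, 24-bit color
gbps = display_stream_gbps(2560, 1440, 60)
print(f"{gbps:.2f} Gb/s = {gbps / 8:.2f} GB/s")  # 5.31 Gb/s = 0.66 GB/s
```

Note the bits-vs-bytes distinction: Thunderbolt link rates are quoted in gigabits per second.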

DisplayPort, DVI, and HDMI monitors should take zero Thunderbolt bandwidth on a TB port or controller. If you want to shift to 4K or use mainstream display products, avoiding TB "displays" has benefits for TB network bandwidth consumption. If you need a display docking station to expand the number of ports, fine, but if not, you can save the TB bandwidth for other kinds of TB devices.

So if you want to conserve TB bandwidth so that it is available for non-display TB devices, it is much better to attach the DisplayPort/HDMI/etc. devices directly to the Mac Pro than to push them downstream. With 6 ports there is no good reason to daisy-chain them.
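A minimal sketch of that bookkeeping, taking the claim above at face value: a monitor attached directly as DisplayPort does not consume TB data bandwidth, while one fed through a TB dock is tunneled and does. The 20 Gb/s budget is an ASSUMPTION (the Thunderbolt 2 link rate); usable data throughput is lower, and the `Device` type is purely illustrative.

```python
from dataclasses import dataclass

# ASSUMPTION: 20 Gb/s Thunderbolt 2 budget; real usable data throughput is
# lower (the controller's PCIe uplink is also a limit).
TB_BUDGET_GBPS = 20.0


@dataclass
class Device:
    name: str
    gbps: float
    tunneled: bool  # True if the traffic crosses the TB fabric


def remaining_tb_gbps(devices: list[Device], budget: float = TB_BUDGET_GBPS) -> float:
    """Bandwidth left for other TB devices after tunneled traffic is counted."""
    used = sum(d.gbps for d in devices if d.tunneled)
    return budget - used


uhd = 3840 * 2160 * 60 * 24 / 1e9  # ~11.9 Gb/s raw 4K @ 60 Hz stream
direct = [Device("4K via DisplayPort", uhd, tunneled=False)]
docked = [Device("4K behind TB dock", uhd, tunneled=True)]
print(remaining_tb_gbps(direct))            # 20.0
print(round(remaining_tb_gbps(docked), 1))  # 8.1
```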

Which TB port to use probably depends more on the computational demand the workload is throwing at the 2nd GPU. If that GPU carries little or no display workload (e.g., the displays are on ports 5, 6, and/or HDMI), then I would collect the non-display TB devices on the "video" TB ports driven by that GPU (I think that may be ports 1-4, or at least 1-2).
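One way to express that rule of thumb, as a sketch only: pick a free TB port on whichever GPU is driving the fewest displays. Display count is a crude stand-in for GPU load (it ignores compute/OpenCL work), and the port-to-GPU map is the same HYPOTHETICAL guess (ports 1-4 on one GPU; 5, 6, and HDMI on the other).

```python
from collections import Counter

# HYPOTHETICAL port-to-GPU map; not confirmed by Apple.
PORT_TO_GPU = {"1": "GPU-A", "2": "GPU-A", "3": "GPU-A", "4": "GPU-A",
               "5": "GPU-B", "6": "GPU-B", "HDMI": "GPU-B"}


def pick_port_for_data_device(display_ports: list[str], free_ports: list[str]) -> str:
    """Choose a free TB port on the GPU that drives the fewest displays."""
    load = Counter(PORT_TO_GPU[p] for p in display_ports)
    candidates = [p for p in free_ports if p != "HDMI"]  # HDMI can't host TB devices
    # Counter yields 0 for a GPU with no displays; min() keeps the first tie.
    return min(candidates, key=lambda p: load[PORT_TO_GPU[p]])


print(pick_port_for_data_device(["1", "5", "HDMI"], ["2", "3", "4", "6"]))  # 2
```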

Some older apps may work better if both screens of the app are handled by the same GPU (older versions of FCPX had this limitation; I'm not sure whether the new one still has the issue. That wouldn't be surprising, as Apple may still want to force a segregation of GPU and OpenCL workloads.)

If you are going to be using both GPUs, then it makes more sense to spread the GPU load out more evenly (e.g., attach displays in a vertical pattern: for instance, use the 'column' of ports that includes the HDMI port, starting from the bottom of the column).
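The "spread it out" idea amounts to alternating displays between the two GPUs. A minimal round-robin sketch follows; the port grouping and ordering are HYPOTHETICAL (the physical column layout isn't modeled), and only the alternation is the point.

```python
from itertools import cycle

# HYPOTHETICAL grouping of display-capable ports by the GPU assumed to drive
# them, HDMI first; the ordering is illustrative only.
PORTS_BY_GPU = {
    "GPU-B": ["HDMI", "5", "6"],
    "GPU-A": ["1", "2", "3", "4"],
}


def spread_displays(n_displays: int) -> list[tuple[str, str]]:
    """Alternate displays between GPUs to balance their display load."""
    queues = {gpu: list(ports) for gpu, ports in PORTS_BY_GPU.items()}
    assignment: list[tuple[str, str]] = []
    for gpu in cycle(queues):
        if len(assignment) == n_displays:
            break
        if not any(queues.values()):
            raise ValueError("more displays than display-capable ports")
        if queues[gpu]:  # skip a GPU whose ports are already used up
            assignment.append((gpu, queues[gpu].pop(0)))
    return assignment


print(spread_displays(3))  # [('GPU-B', 'HDMI'), ('GPU-A', '1'), ('GPU-B', '5')]
```

Apps with the same-GPU limitation mentioned above would want the opposite: both of their screens on one GPU's ports.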

> Those are (PCIe 2.0) directly connected to the CPU, not bad.

They are not directly connected to the CPU. If the PLX switch can uplift the PCIe 2.0 traffic, in parallel, onto PCIe 3.0, then it is not bad. If not, it is what you have to live with.
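The "uplift" question is simple arithmetic on per-lane rates: PCIe 2.0 runs 5 GT/s with 8b/10b encoding (4 Gb/s per lane), PCIe 3.0 runs 8 GT/s with 128b/130b (about 7.88 Gb/s per lane). This sketch assumes each TB controller sits on a PCIe 2.0 x4 link; the upstream lane count is a HYPOTHETICAL parameter, not the Mac Pro's documented configuration, and switch buffering and scheduling are not modeled.

```python
# Per-lane transfer rates and line-encoding efficiency by PCIe generation.
GT_PER_S = {2: 5.0, 3: 8.0}
ENCODING = {2: 8 / 10, 3: 128 / 130}


def pcie_gbps(gen: int, lanes: int) -> float:
    """Data rate in Gb/s after line encoding (before protocol overhead)."""
    return GT_PER_S[gen] * ENCODING[gen] * lanes


def uplink_keeps_up(n_downstream: int, down_lanes: int, up_lanes: int) -> bool:
    """Can a PCIe 3.0 uplink carry n PCIe 2.0 links running flat out at once?"""
    demand = n_downstream * pcie_gbps(2, down_lanes)
    return pcie_gbps(3, up_lanes) >= demand


# Three hypothetical TB controllers on PCIe 2.0 x4 each (48 Gb/s total)
print(uplink_keeps_up(3, 4, 8))  # True  (x8 3.0 is ~63 Gb/s)
print(uplink_keeps_up(3, 4, 4))  # False (x4 3.0 is ~31.5 Gb/s)
```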

> USB 3.0 has only a single PCIe lane for it. So you're better off using TB disks.

It depends in part on what you already have. If you have, and have been using, USB 3.0 disks, it doesn't make sense to dump them.

There is a performance/cost trade-off for the disks. Pragmatically, the overwhelming majority of deployed USB 3.0 sockets have exactly the same single PCIe lane (or equivalent) of bandwidth behind them, as long as you are dealing with a single USB controller.
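A quick sketch of why the shared lane matters: a USB 3.0 port signals at 5 Gb/s with 8b/10b encoding (4 Gb/s of data), which is also what one PCIe 2.0 lane carries, so one disk can in principle fill the lane and several active disks split it. The assumption that the controller hangs off exactly one PCIe 2.0 lane comes from the thread; real-world throughput is lower than these ceilings.

```python
# ASSUMPTIONS: USB 3.0 = 5 Gb/s with 8b/10b encoding; the USB controller sits
# behind one PCIe 2.0 lane (5 GT/s, 8b/10b). Protocol overhead is ignored.
USB3_PORT_GBPS = 5.0 * 8 / 10
PCIE2_LANE_GBPS = 5.0 * 8 / 10


def per_disk_ceiling_gbps(active_disks: int, uplink_lanes: int = 1) -> float:
    """Upper bound per disk when several USB 3.0 disks share one controller."""
    shared = PCIE2_LANE_GBPS * uplink_lanes / active_disks
    return min(USB3_PORT_GBPS, shared)


for n in (1, 2, 4):
    print(n, per_disk_ceiling_gbps(n))  # 1 4.0 / 2 2.0 / 4 1.0
```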

----------

> Apple states that monitors should ideally be on separate TB busses: 1, 2, or 3.

Monitors, or their display docking stations? Docking stations with a DisplayPort sink attached to them are also high TB bandwidth consumers. Those should be spread out; otherwise, you are dropping multiple hogs onto one bus.
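A small sketch of the "don't stack hogs" check: treat both TB displays and docks with a monitor attached as high-bandwidth consumers, then flag any bus carrying more than one. The port-to-bus map is the same HYPOTHETICAL guess used elsewhere in this thread, and the labels are free-form strings.

```python
from collections import defaultdict

# HYPOTHETICAL port-to-bus map; check System Information for the real one.
PORT_TO_BUS = {"1": 2, "2": 2, "3": 1, "4": 1, "5": 0, "6": 0}


def crowded_buses(hogs: dict[str, str]) -> dict[int, list[str]]:
    """Return buses carrying more than one high-bandwidth consumer.

    `hogs` maps a port to a label such as "TB Display" or "dock + DP monitor".
    """
    per_bus: dict[int, list[str]] = defaultdict(list)
    for port, label in hogs.items():
        per_bus[PORT_TO_BUS[port]].append(label)
    return {bus: labels for bus, labels in per_bus.items() if len(labels) > 1}


print(crowded_buses({"1": "TB Display", "2": "dock + DP monitor", "3": "TB Display"}))
# {2: ['TB Display', 'dock + DP monitor']}
```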

Apple's example of 6 TB display docking stations is a highly goofy configuration. It will distribute the GPU load over all of the GPUs and then suck up the vast majority of the TB bandwidth with video traffic. You'd have a humongous number of Ethernet, FireWire, and USB ports but quite limited external bandwidth to storage (unless you somehow 'glue' all of those docking station ports back together).