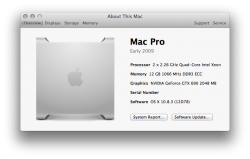

I have a MacPro4,1 (firmware updated to MacPro5,1) with 2 x 2.26 GHz quad-core CPUs (8 cores total).

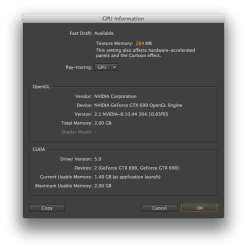

I'm looking to upgrade my graphics power, and one setup I've been considering is keeping my current GeForce GT 120 in Slot-2 to drive the GUI and adding a GTX 690 in Slot-1.

Rob at Bare Feats ran a few After Effects benchmarks with the GTX 690 that smoked both the GTX 580c and GTX 680 Mac. See times below:

After Effects CS6 - Ray-Traced 3D render times (minutes, lower is better)

- GTX 690: 10.7

- GTX 580c: 12.4

- GTX 680c Mac: 14.3

- GTX 680 Mac: 15.3

- GTX 570: 15.9
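
To put a number on "smoked", here is a quick sketch of the arithmetic using only the render times listed above. The function name `time_saved_percent` and the `savings` dictionary are just my own labels for illustration, not anything from Rob's article.

```python
# Render times in minutes from the Bare Feats list above (lower is better).
times = {
    "GTX 690": 10.7,
    "GTX 580c": 12.4,
    "GTX 680c Mac": 14.3,
    "GTX 680 Mac": 15.3,
    "GTX 570": 15.9,
}


def time_saved_percent(baseline, candidate):
    """Percent less render time the candidate needs compared with the baseline."""
    return (baseline - candidate) / baseline * 100


# Compare the GTX 690 against every other card in the list.
savings = {
    card: round(time_saved_percent(minutes, times["GTX 690"]), 1)
    for card, minutes in times.items()
    if card != "GTX 690"
}

for card, pct in savings.items():
    print(f"GTX 690 vs {card}: {pct}% less render time")
```

By that arithmetic the GTX 690 needs roughly 14% less time than the GTX 580c and about 33% less than the GTX 570 on this one test, so the gap to the next-fastest card is real but not enormous.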

Rob also mentioned that with a 6-pin to 8-pin adaptor he was able to run the GTX 690 (EVGA) and "banged on it hard and never had any issues . . . [and] was also very quiet." The only drawback he mentioned was that the GTX 690 only ran at PCIe 1.0 instead of PCIe 2.0. Nonetheless, it still managed to outperform the other cards in his After Effects benchmarking.

With the GeForce GT 120 driving the GUI, I'll still have my boot screens while using the GTX 690's GPU power for After Effects.

Thoughts on this setup? Viable?

Thanks!!