I recently received the new NVIDIA 6800 Ultra DDL. While it drastically increased frame rates in games over my ATI 9600 Pro, professional applications and benchmarks (such as Cinema 4D and SPECviewperf 7.1 and 8) showed very little difference. The NVIDIA card blows away the 9600 in raw capability, so something is awry, especially since professional apps on the PC show much more improvement. Professional apps tend to manipulate very high polygon models and scenes, so to get some insight I ran the OpenMark benchmark, which tests raw OpenGL triangle throughput on shaded, lit and textured geometry.

dmglover was nice enough to provide me with his test results from both the Mac and the PC, using the 6800 Ultra in both systems.

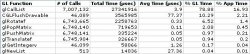

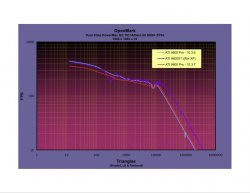

The results are in the charts above. While the Mac is nearly twice as fast (amazing) at very low triangle counts, the PC soon catches up: at 10,000 triangles it is twice as fast, and it reaches about 3x as fast as the triangle count keeps increasing. Where the curve is linear, the FPS no longer depends on scene resolution and is completely platform dependent. A 2.5 GHz G5 would shift that curve to the right, closer to the PC results.
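For anyone who wants to compare their own runs the same way, here is a rough Python sketch of the arithmetic: it turns each (triangles per frame, FPS) sample into triangles per second and computes the PC-to-Mac ratio at matching triangle counts. The function names and all of the numbers are made-up placeholders for illustration, not dmglover's measurements or anything OpenMark outputs directly.

```python
def triangles_per_second(triangles, fps):
    """Raw throughput for one benchmark sample."""
    return triangles * fps


def speed_ratio(fps_pc, fps_mac):
    """How many times faster the PC is than the Mac at one triangle count."""
    return fps_pc / fps_mac


# PLACEHOLDER samples: triangles per frame -> FPS. Replace with real runs.
mac = {1_000: 800.0, 10_000: 100.0, 100_000: 10.0}
pc = {1_000: 400.0, 10_000: 200.0, 100_000: 30.0}

for tris in sorted(mac):
    print(
        tris,
        triangles_per_second(tris, mac[tris]),
        triangles_per_second(tris, pc[tris]),
        speed_ratio(pc[tris], mac[tris]),
    )
```

If triangles per second stops changing as the triangle count goes up, the system is probably throughput limited at that point, which is one way to read the straight part of the curve.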

I am interested in both Mac and PC results so I can send them to Apple. I would especially like results from a 2.5 GHz G5 with the 6800 Ultra, as well as a 9800 XT on a 2.5 GHz G5. Athlon 64/Opteron results are also needed.

OpenMark: (http://www.giofx.net/osx/) (http://www.giofx.net/win/misc/OpenMark.zip)

Thanks for any info!
