Ever wanted to know not only the precise GPS coordinates of something you've shot a video of while travelling, but also the direction (based on the internal compass) the camera was pointing while shooting it? Ever wanted to know how this can be done on an iPhone (or any 3G iPad) or, if you have an external camera without GPS or compass, with the help of your iDevice? Read on; this article is for you!

In the first chapter of the article, I explain why you can't do this with Apple's stock Camera app or, for that matter, when compass recording is concerned, with any decent(!) standalone camera out there.

In the second one, I show you two third-party apps for iOS that (are supposed to) do the trick. Should you only need an app for shooting with dynamic location / direction information, you can safely skip all the other chapters.

In the third chapter (Shooting with an external camera), I explain how iPhones (and 3G iPads) can be used as a GPS & compass tracker to be used together with an external camera. After listing and evaluating several GPS (but not compass!) trackers readily available in the AppStore, I present mine, along with source code(!), that does this including even compass tracking. If you in no way want to shoot with your iPhone for the reasons outlined in this chapter (narrow field-of-view or mono audio, for example) but would still use it as an excellent tracker, this chapter is for you.

Note that THIS article also explains the advantages of dynamic location / direction storing - you might want to read it before going on, should you not see the point in all this, based on my introduction above.

1. The problem

1.1 The problems with dedicated cameras

I've frequently posted information on cameras capable of storing GPS coordinates along with still shots (and videos). One such list, now 14 months old, is in my well-known still photo geotagging tutorial.

Unfortunately, the situation hasn't changed much in the last 14 months. Still very few cameras have built-in GPS, let alone a compass. Video support is even worse: the only cameras with continuous(!) GPS data recording are pretty restricted when it comes to overall image quality, with particular emphasis on low-light performance. Unfortunately, the best compact cameras with GPS IQ-wise, like the Canon S100, aren't able to record continuous GPS data at all (as they aren't using AVCHD but simple, restricted MOV files; see Section 1.2.1 below). The GPS-equipped AVCHD models in the Panasonic TZ / ZS series (for example, the ZS20), which are already capable of dynamic location logging, can't boast very good image quality. Finally, Sony's HX-series P&S cameras (the only ones that do have compasses in addition to GPS, both dynamically logged along with videos), being compact superzooms, aren't really meant for enthusiasts, not even in their most recent incarnations, as their lenses are pretty slow and their sensors tiny (as with all compact superzooms, as opposed to true high-quality small enthusiast cameras like the Sony RX100, the Fujifilm XF1, the Panasonic LX series etc. - see THIS for more examples of these cameras).

Needless to say, the king of sports cameras, the GoPro series, doesn't even contain a GPS unit (let alone a compass), not even in its third incarnation. The only dedicated sports camera model with GPS, the Contour+2, doesn't have a compass either and is, generally, much less recommended than the GoPro series, particularly if you need easy and safe chest / head mountability, which is particularly important to, say, skiers like me.

1.2 The problems with Apple's approach: a single GPS location + no compass info

iPhones starting with the 3GS, iPod touches starting with the 4th generation and all iPads have compasses; in addition, all video recording-capable iPhones and all 3G-enabled iPads have GPS. Unfortunately, these are not utilized when shooting videos. This means that, unless you use third-party apps (like that of Ubipix, see below), you'll never know where the iPhone was when a particular frame of a video was shot and in which direction it was pointed.

There are several reasons for iOS's inability to save GPS / compass information. In the following subsections, I explain them all. Again, this info isn't truly needed by people who only want to know which AppStore app to select to be able to dynamically geotag their videos. They can safely skip the remainder of this chapter and move straight to chapter 2.

1.2.1 Video container restrictions

I've very often explained why the MOV / MP4 / M4V container, the only one Apple supports, is inferior to more advanced ones like MKV or AVCHD. Back then, I didn't mention that AVCHD also supports storing variable, continuously changing location (and compass) info with every single(!) frame.

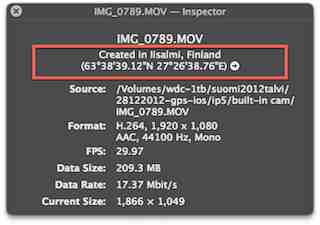

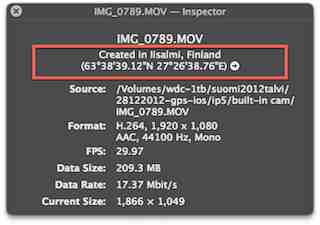

MOVs recorded by iDevices, unfortunately, only support one global location tag. It's this value that QuickTime shows in the following dialog (via Cmd + I):

(As with all the other images in the article, click for a much better-quality and larger one.)

It's worth pointing out that the otherwise great and highly recommended MediaInfo doesn't display this info:

Incidentally, there is something interesting here: the tagging time (11:11:53) is 12 seconds later than the recording/encoding date (11:11:41). This is because location data is not guaranteed to be present when video recording starts; it is stored in the target MOV file once it becomes available. This also means the coordinates you find in a video shot on an iDevice aren't necessarily those of the starting point but may belong to a point several (in this particular case, 12) seconds into the video.

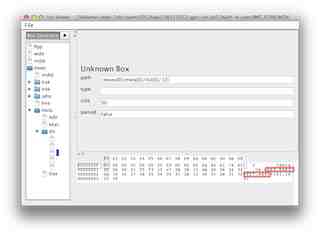

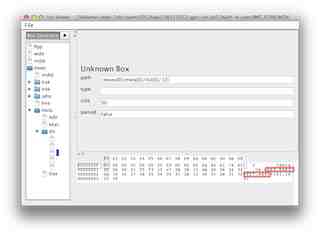

Physically, the GPS coordinates are at /moov[0]/meta[0]/ilst[0]/[2] as can be seen in the following screenshot shot with IsoViewer:

Not being associated with any particular frame, this also means there can be only one instance of this data for the entire video stream.
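To make the atom path above a bit more tangible, here is a minimal Python sketch of how a MOV / MP4 file can be walked box by box. It relies only on the generic box header layout (a 4-byte big-endian size followed by a 4-byte type, with size 1 meaning a 64-bit size follows and size 0 meaning "up to the end of the container"). The function names `iter_boxes` and `find_box` are my own, hypothetical helpers, not part of any library, and the optional `skip_after_header` argument is an assumption to cover files where `meta` carries four extra version/flags bytes before its children.

```python
import struct


def iter_boxes(data, start=0, end=None):
    """Yield (type, payload_start, payload_end) for each box in data[start:end]."""
    end = len(data) if end is None else end
    pos = start
    while pos + 8 <= end:
        size, box_type = struct.unpack_from(">I4s", data, pos)
        header = 8
        if size == 1:
            # A 64-bit size follows the type field.
            if pos + 16 > end:
                break
            size = struct.unpack_from(">Q", data, pos + 8)[0]
            header = 16
        elif size == 0:
            # The box extends to the end of the enclosing container.
            size = end - pos
        if size < header or pos + size > end:
            break  # malformed or truncated box: stop rather than guess
        yield box_type.decode("latin-1"), pos + header, pos + size
        pos += size


def find_box(data, path, skip_after_header=None):
    """Follow a list of box types, e.g. ["moov", "meta", "ilst"].

    Returns the payload bytes of the last box, or None if the path is absent.
    skip_after_header maps a box type to the number of bytes to skip before
    its children (hypothetical knob, e.g. {"meta": 4}).
    """
    skip_after_header = skip_after_header or {}
    start, end = 0, len(data)
    for wanted in path:
        for box_type, p_start, p_end in iter_boxes(data, start, end):
            if box_type == wanted:
                start = p_start + skip_after_header.get(wanted, 0)
                end = p_end
                break
        else:
            return None
    return data[start:end]
```

With the whole file read into `data`, `find_box(data, ["moov", "meta", "ilst"])` returns the raw bytes of the list the location tag lives in. Because that list hangs off `moov` and not off any individual sample, there is simply no per-frame slot to look up.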

Programmatically setting the metadata property of AVCaptureMovieFileOutput (see section Adding Metadata to a File in the official AV Foundation Programming Guide) doesn't help either, as it sets exactly this list element and not that of the currently recorded frame. That is, creating your own video recorder and continuously setting the metadata with the then-current GPS coordinates won't work. The MOV container (and Apple's limited API to manipulate it) simply doesn't support this.

1.2.2 What about the video framegrabs of the iPhone 5?

Unfortunately, not even the still shots made by the iPhone 5 during video recording have dynamic location info. (Technically, these are vastly inferior simple framegrabs, as opposed to the full-resolution still images delivered by more decent cameras like the GoPro 3 Black Edition, the Nikon System 1 cameras or newer Sony models.) They could, as each of them does have a fully independent location tag. That is, if you travel by car while continuously shooting a video and, without stopping video recording, tap the still snapshot icon on your iPhone 5's screen, the 1920*1080 still shot stored will have the exact same location tag as the video, that is, the location where the video was tagged. These shots will not have the actual location where the shot itself was taken.

2. Third-party solutions readily available on iOS

2.1 Ubipix

Without doubt the best application to record dynamically geotagged videos even with compass info is the free Ubipix (AppStore link).

Basically, when you record a video from inside the app, it'll also record all the location and camera direction info along with the video recording. These recordings are stored in the /Documents/defaultFolder folder of the app. Recording one video will result in three files being created: the video recording itself (say, 1356684671.mov), a text file (.txt) containing all the location and compass info and, finally, a PNG framegrab of the video at the same resolution as the video itself. The video file isn't encrypted, which means you can edit it before uploading (probably an important thing, should you want to remove the audio track before making the video public). You can even shoot the video in another, much more capable app (say, the built-in Camera app or third-party ones like FiLMiC Pro) that makes use of a lot more features of the current iPhone model (torch, snapshots on the iPhone 5, separate exposure / focus carets, a lot of additional settings on jailbroken devices in the stock Camera app via CameraTweak etc.) and, then, manually transfer the video to this Ubipix folder for later upload. (To create a compatible .TXT file with all the location / compass info in Ubipix's own format, you'll need to modify my location logger discussed in Section 3.2.) Also, you can shoot a video with an external camera and, after converting its video to a MOV container (with AVCHD videos, just remuxing via iVI; no conversion is needed if it was already shot as MOV), do the same before upload.
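The idea of a sidecar text file next to the video can be sketched in a few lines of Python. Note that the layout below (one `seconds,latitude,longitude,heading` line per sample) is purely hypothetical and is NOT Ubipix's actual .txt format, which isn't documented here; the coordinates are made up as well. The sketch only shows the principle: the video stays an ordinary MOV, and the location / direction for any moment of playback is looked up in the separately logged track.

```python
import bisect
import csv
import io


def load_track(text):
    """Parse 'seconds,latitude,longitude,heading' lines (hypothetical format)."""
    rows = [tuple(float(v) for v in row)
            for row in csv.reader(io.StringIO(text)) if row]
    return sorted(rows)  # sort by timestamp, the first field


def sample_at(track, t):
    """Return the logged sample whose timestamp is closest to video time t."""
    if not track:
        raise ValueError("empty track")
    times = [row[0] for row in track]
    i = bisect.bisect_left(times, t)
    # Only the neighbours on either side of t can be the closest sample.
    candidates = track[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda row: abs(row[0] - t))


# Made-up sample data, one line per second of video.
track = load_track(
    "0,63.5600,27.1900,10\n"
    "1,63.5601,27.1902,12\n"
    "2,63.5602,27.1904,15\n"
)
seconds, lat, lon, heading = sample_at(track, 1.4)
```

A player would call something like `sample_at` on every position update to move the map marker and rotate the direction indicator.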

After finishing shooting (which, again, may involve a conversion with an external tool or even transferring videos from another camera), all you need to do is log in to your free Ubipix account in the Settings dialog of the iOS client (annotated by a red rectangle below):

(an iPhone 5 shot; as you can see, the app doesn't make use of the full screen estate of the newer, 16:9 devices)

and, then, select the video to upload and tap the upload icon in the upper right corner. If you want to keep the video private, you'll need to tap the Public icon (the third from the top) before uploading so that it becomes Private.

These two icons are both annotated in the following screenshot:

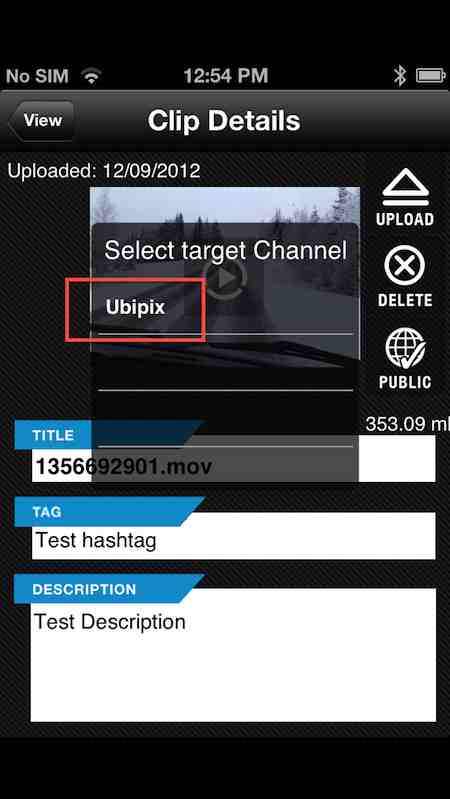

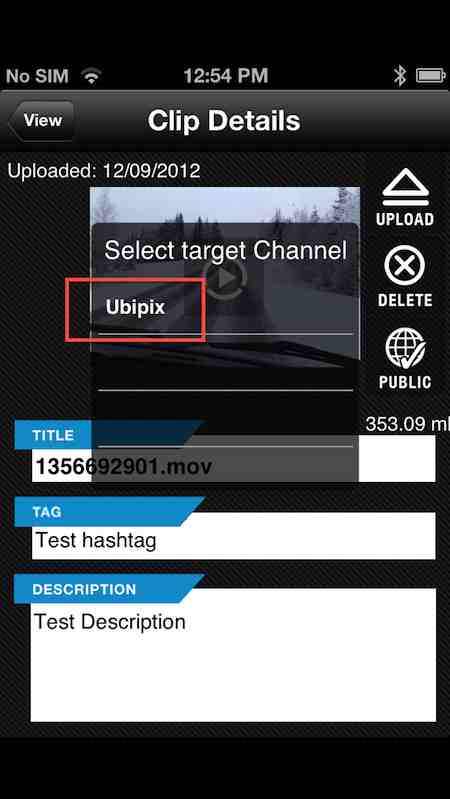

After tapping Upload, you'll need to select a target channel; currently, only one is available (Ubipix):

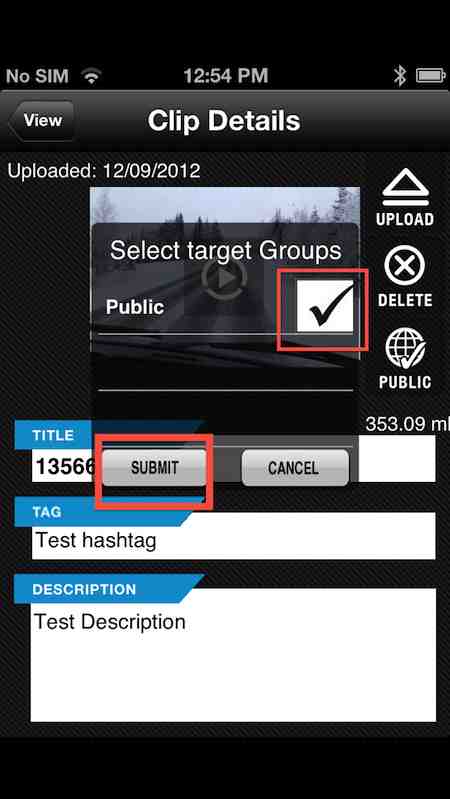

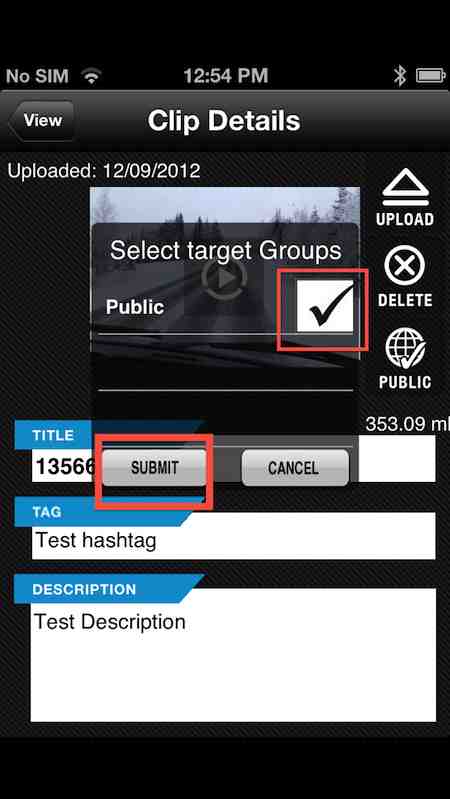

After tapping it, just check the Public checkbox. Don't worry: if you upload a private video, this won't make it public:

Then, just tap the Submit button, also annotated in the above screenshot.

The video uploads and, after a while, becomes available. You'll be e-mailed the unique URL of your video. You can access it both through the link and by logging in, in either a desktop or a mobile (including iOS) browser, and tapping the myTracks link in the top right corner. You can also quickly navigate to your public videos on the main map if their location is unique. For example, the following shot shows three videos shot here in Finland (all three by me), after clicking the circle with the number in it:

2.1.1 Ubipix problems, bugs and some fixes

2.1.1.1 Disabling audio

Note that the Settings dialog has an audio switch (annotated by a green rectangle below):

While it's even disabled by default, it doesn't seem to have any effect on the upload. That is, there is no way to disable audio upload. The only way to get rid of the audio track is manually editing the original files (removing the audio track) and uploading the edited versions.

For this, I recommend MP4Tools (albeit other MOV editor tools should also work). Just make sure you rename the .mov extensions to .m4v before dragging the files to MP4Tools and, after deleting the audio tracks, rename the files back to .mov. After this, the files become unplayable on the device, but they play fine after upload. All the example videos below (see section 2.1.2) have been processed this way before upload; this was the only way to remove private family member chat (albeit it was in Finnish so few would have understood it).
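If you'd rather script the audio removal than click through a GUI, the same step can be sketched with ffmpeg driven from Python. This is a different tool from MP4Tools, not the one the example videos were processed with, and whether Ubipix accepts files produced this way is untested here. The sketch assumes ffmpeg is installed and on the PATH; the function names and file names are hypothetical. The `-an` option drops the audio stream and `-c copy` copies the remaining video stream without re-encoding.

```python
import subprocess


def strip_audio_command(src, dst):
    """Build an ffmpeg argument list that copies the video and drops audio."""
    return ["ffmpeg", "-i", src, "-c", "copy", "-an", dst]


def strip_audio(src, dst):
    """Run ffmpeg; raises CalledProcessError if ffmpeg reports a failure."""
    subprocess.run(strip_audio_command(src, dst), check=True)


# Example (file names are placeholders):
# strip_audio("1356684671.mov", "1356684671_noaudio.mov")
```

As with the manual approach, keep the original file name when copying the result back to the Ubipix folder, so that it still matches its .txt and .png companions.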

2.1.1.2 Other problems

- Compass errors can't be fixed by simply adding a constant bias (see below)

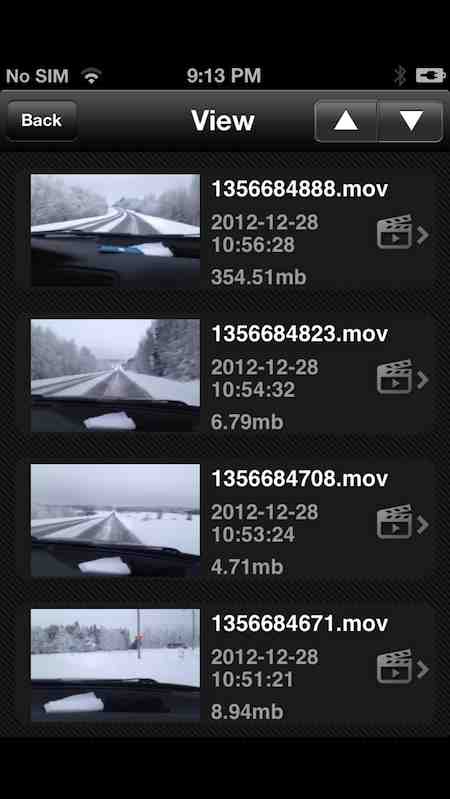

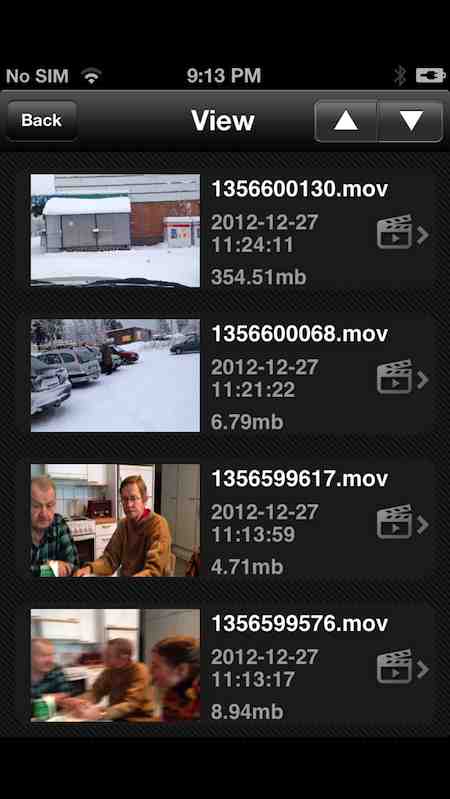

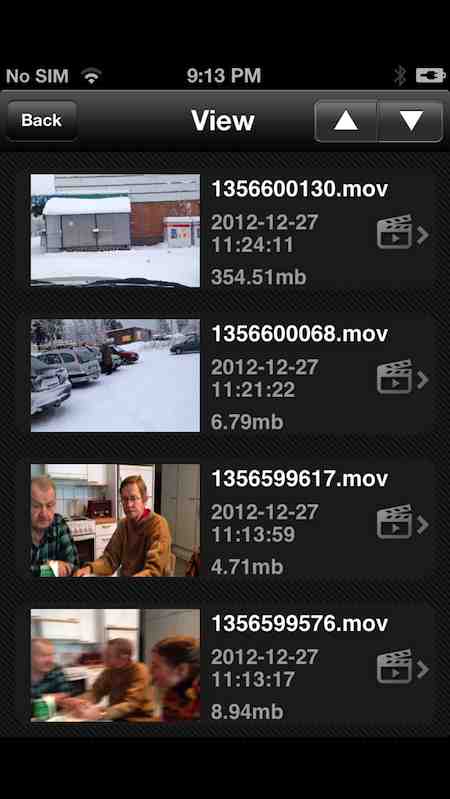

- Buggy iOS interface: with videos taking up more than one page (that is, with more than 5 videos), the filesizes shown on subsequent pages are always the same as the ones on the first page. Some examples:

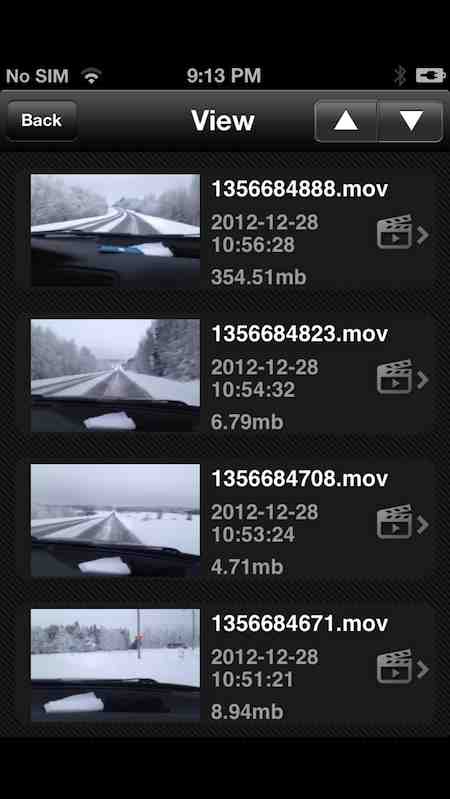

This is the first page of the list. Note the filesizes: 354.51, 6.79, 4.79, 8.94 Mbytes. Now, let's take a look at the second page (Ubipix organizes the videos by their shooting date):

The filenames and the shooting dates are correctly displayed, so are the thumbnails but the sizes aren't. They're exactly the same as on the first page: 354.51, 6.79, 4.79, 8.94 Mbytes.

The situation is the same on the third and fourth pages:

Note that there are other, display-related bugs. For example, the date of the previous upload is also incorrectly displayed (in the screenshots right in the uploading-specific 2.1 section, 12/09/2012).

- no desktop or local map rendering capabilities: you'll always need to upload the video and watch it streamed from Ubipix's servers.

- there isn't any location-specific information (town / street names or even all locations shown on a map) in videos before uploading, unlike with the, in this respect, vastly superior GeoVid. (See 2.2 for more info on GeoVid.)

- limited recording time: 15 minutes. Note that, should you want to switch to the highest-quality (on iPhone 4S+es, 1080p) mode, it automatically switches back to 5 minutes. (You can always change this with the dedicated slider in Settings.)

- there's no way of downloading the video from Ubipix's servers as, say, an MP4 file. (Or, for that matter, in any other format.) This is unlike traditional video (or photo) sharing sites like Vimeo, YouTube etc. That is, should you want to archive your videos, you'll need to make use of, say, iExplorer to directly access the Documents/defaultFolder folder of the Ubipix app. (Needless to say, it's not possible to do this via iTunes as iTunes File Sharing isn't enabled by the app.)

2.1.2 Ubipix examples you can try right away

I've made three videos public so that you can check out how location / direction tracking works. They work just fine even without installing Ubipix on your iDevice. You'll want to use a desktop browser or an iPad for watching the examples, definitely not an iPhone / iPod touch. (See the next, 2.1.3 section for the why's.) All these videos have been shot on my iPhone 5 here in Iisalmi, Finland.

In-town video

Note that this video shows a compass at least 90 degrees off in the default Digital compass mode; everything else is OK. (Of course, switching to the GPS Bearing mode fixes this but, then, the camera's current direction isn't shown at all. See section 5 for more links to discussions on the difference between bearing (perfectly computable from at least two pairs of simple GPS coordinates) and true, compass-based camera direction.)

A big problem with Ubipix is the lack of any way to define a constant bias, which would have definitely made the camera direction display right with this and the following video.
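To illustrate the difference between the two modes, and what a constant bias correction would look like, here is a minimal Python sketch. It uses the standard initial-bearing (forward azimuth) formula on a spherical Earth; the function names are my own and nothing here is taken from Ubipix. The bias estimate only makes sense under the assumption that the camera was pointing in the direction of travel (for example, mounted facing forward in a car), so that the GPS bearing can serve as the reference.

```python
import math


def gps_bearing(lat1, lon1, lat2, lon2):
    """Initial bearing in degrees (0 = north, 90 = east) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(x, y)) % 360.0


def heading_error(compass, bearing):
    """Signed smallest difference compass - bearing, in the range [-180, 180)."""
    return (compass - bearing + 180.0) % 360.0 - 180.0


def apply_bias(heading, bias):
    """Shift a compass heading by a constant bias, wrapping around at 360."""
    return (heading + bias) % 360.0


# Made-up example: the car drives due east, but the compass reports 180.
bearing = gps_bearing(0.0, 0.0, 0.0, 1.0)       # direction of travel
error = heading_error(180.0, bearing)           # how far off the compass is
corrected = apply_bias(180.0, -error)           # compass reading with bias removed
```

Bearing needs two fixes and says nothing when the device stands still or the camera is turned sideways; the compass heading needs only one reading but, as the video shows, can be off by a roughly constant amount inside a car.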

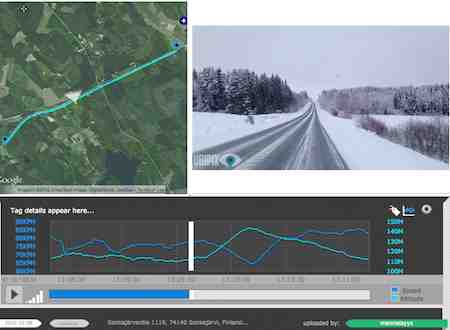

Outside the town

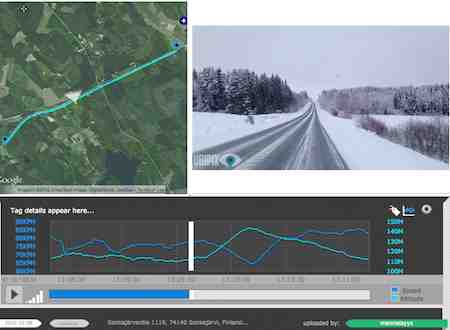

In this video, the 110 → 150m uphill in particular can nicely be seen on the bottom graph, in both the altitude and the speed (the car slows down while climbing). The following shot shows the position just before the uphill:

Finally, let me present a video where the compass did work properly (instead of in a car, it was shot outdoors):

HERE

This also shows that, even here in the North-east, the compass works OK.

(cont'd below, in the second post)
