Mac: M3 - Hardware-accelerated RT (Part 1)

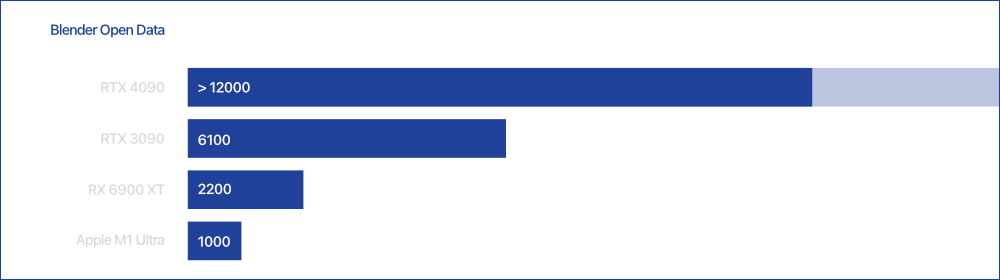

All Apple needs to do is make the GPU in the Mac Pro 12x faster than the GPU in the M1 Ultra to compete with the RTX 4090. Piece of cake. … and more seriously, I think we can expect the Radeon RX 7000 series in the Mac Pro.